👋 Hello @luka@yarn.girlonthemoon.xyz, welcome to yarn, a Yarn.social Pod! To get started you may want to check out the pod’s Discover feed to find users to follow and interact with. To follow new users, use the ⨁ Follow button on their profile page or use the Follow form and enter a Twtxt URL. You may also find other feeds of interest via Feeds. Welcome! 🤗

@lyse@lyse.isobeef.org Commercial forest, I guess? (Are there any other forests?)

@lyse@lyse.isobeef.org I had that as my avatar/userprofile pic at work for a few years. 😆

@lyse@lyse.isobeef.org Luckily, yeah. Happens every now and then. It’s usually not even worth reporting, they often fix it in 30-90 minutes anyway.

@xuu@txt.sour.is Yeah, it will be delayed. Oh well. That’s just the way it is. :-)

@movq@www.uninformativ.de Hahaha, that filename! :-D 100 times better than I could ever play.

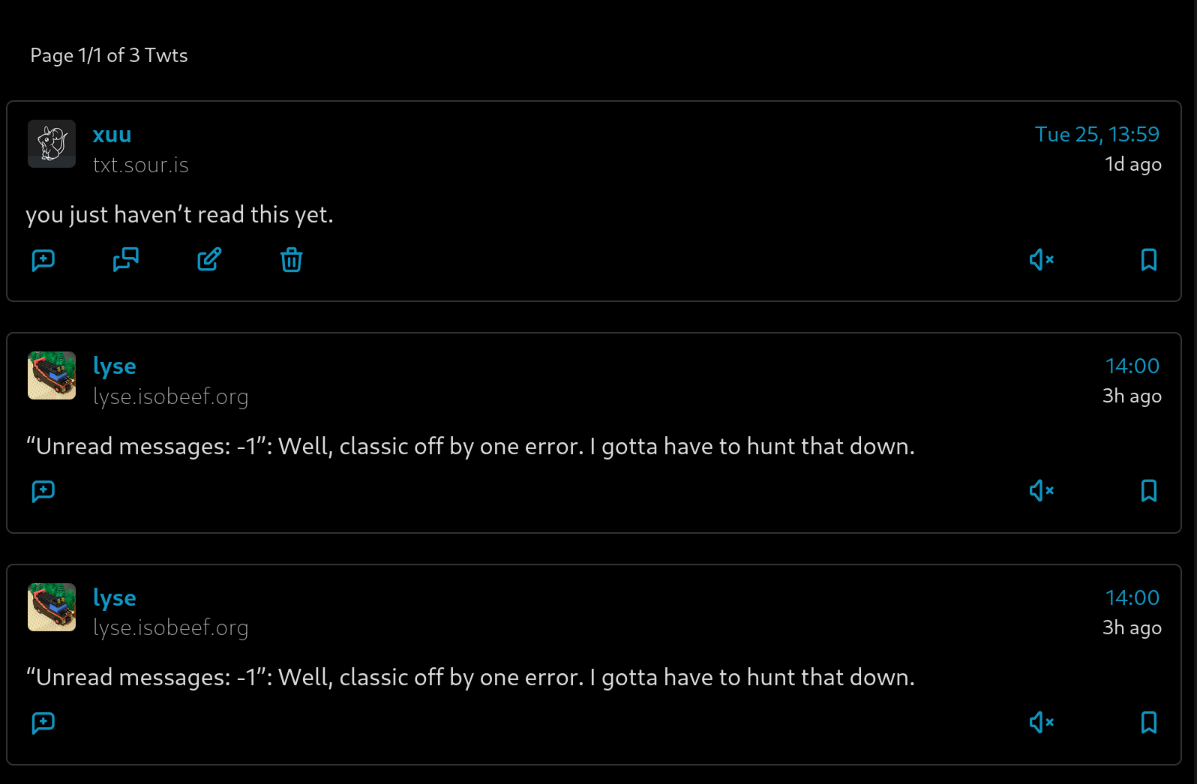

@xuu@txt.sour.is If the unread counter becomes negative, wouldn’t that mean I have that many more read messages? :-D

@bender@twtxt.net You’re spot on, it’s important to not introduce classical bugs!

@movq@www.uninformativ.de Oh dear. :-( Have they fixed it?

@prologic@twtxt.net @movq@www.uninformativ.de I had a t-shirt with this a decade or two ago. :-)

@bender@twtxt.net I thought about the Gemini protocol. Why do corporations shit on this name with cryptocurrency and LLMs?

@xuu@txt.sour.is like feeds+bridgy.fed? Will be happy anyway

@bender@twtxt.net I taught the whole ecosystem 😁

@prologic@twtxt.net @eapl.me@eapl.me The question I was asked the most was: How do I discover people?

Someone came up with a fantastic idea: instead of adding the new twt at the end of the feed, add it at the beginning. That way, you can paginate by cutting off the request after a few lines.
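A rough sketch of why that helps, assuming the client only receives the first bytes of the feed (for instance via an HTTP Range request) and that twts are tab-separated lines with `#` comment lines, as in twtxt. The function name `first_page` and the sample feed are made up for illustration; this is not how any particular client does it.

```python
def first_page(feed_prefix: bytes, limit: int) -> list[str]:
    """Return up to `limit` complete twt lines from the start of a feed.

    `feed_prefix` stands for whatever bytes arrived before the request
    was cut off, so its last line may be incomplete and is dropped.
    """
    text = feed_prefix.decode("utf-8", errors="ignore")
    lines = text.split("\n")
    # Everything after the last newline may be a truncated twt.
    complete = lines[:-1]
    twts = [ln for ln in complete if ln.strip() and not ln.startswith("#")]
    return twts[:limit]


# Hypothetical feed with the newest twt first.
FEED = (
    "# nick = example\n"
    "2025-03-03T12:00:00Z\tnewest twt\n"
    "2025-03-02T12:00:00Z\tolder twt\n"
    "2025-03-01T12:00:00Z\toldest twt\n"
).encode("utf-8")

# Simulate cutting the response off after 60 bytes: the second twt
# arrives only partially and is therefore ignored.
page = first_page(FEED[:60], limit=2)
print(page)
```

With the newest twts at the top, the cut-off prefix always contains the most recent messages; with an append-only feed, the same prefix would contain the oldest ones.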

@doesnm@doesnm.p.psf.lt haha it’s not coming back. He talked of a standalone thing like feeds, but not in yarnd.

hmm @prologic@twtxt.net how did replying to lyse double up here?

@movq@www.uninformativ.de That’s not very retrocomputing!

about:compat in Firefox.

@lyse@lyse.isobeef.org I remember WebKit having a similar list, but I can’t find it right now … 🙈

@prologic@twtxt.net In all seriousness: Don’t worry, I’m not going to host some Fediverse thingy at the moment, probably never will. 😅

But I do use it quite a lot. Although, I don’t really use it as a social network (as in: following people). I follow some tags like #retrocomputing, which fills my timeline with interesting content. If there was a traditional web forum or mailing list or even a usenet group that covered this topic, I’d use that instead. But that’s all (mostly) dead by now. ☹️

@movq@www.uninformativ.de I see, fair point, yeah.

@movq@www.uninformativ.de Yikes! I didn’t know about about:compat. Crazy!

@xuu@txt.sour.is Wow, that’s a giant graveyard. In my new database I have 16,428 messages as of now. Archive feed support is not yet available, so it’s just the sum of all the 36 main feeds.

@bender@twtxt.net That … was better than expected. 😂

@prologic@twtxt.net Gemini has an answer for you:

This is a conversation thread from a twtxt network, detailing a user’s (movq) frustration with the Mastodon “export data” feature and their consideration of self-hosting a fediverse alternative. Here’s a summary:

- movq’s initial issue:

  - movq is concerned about the volatility of their data on their current Mastodon instance due to a broken “export data” feature.

  - They contacted the admins, but the issue remains unresolved.

  - This led them to contemplate self-hosting.

- Alternative fediverse software suggestions:

  - kat suggests gotosocial as a lightweight alternative to Mastodon.

  - movq agrees, and also mentions snac as a potential option.

- movq’s change of heart:

  - movq ultimately decides that self-hosting any fediverse software, besides twtxt, is too much effort.

- Resolution and compromise:

  - The Mastodon admins attribute the export failure to the size of movq’s account.

  - movq decides to set their Mastodon account to auto-delete posts after approximately 180 days to manage data size.

  - movq also mentions that they use auto-expiring links on twtxt to reduce data storage.

@movq@www.uninformativ.de 600MiB is nothing. That instance must be running on a reduced power machine and, perhaps, has too many users. Have you considered starting afresh? That’s what I have done (when it comes to the Fediverse), four times! :-D

@lyse@lyse.isobeef.org For a brief moment I was confused and puzzled as to how you were able to count read statuses and messages in the cache with such high precision. Then I remembered you are using German numerical notation. LOL.

@lyse@lyse.isobeef.org @bender@twtxt.net It already is a tiling window manager, but some windows can’t be tiled in a meaningful way. I admit that I’m mostly thinking about QEMU or Wine here: They run at a fixed size and can’t be tiled, but I still want to put them in “full screen” mode (i.e., hide anything else).

@movq@www.uninformativ.de Let’s host yarnd! Or maybe wait until @prologic@twtxt.net brings back the ActivityPub support that was deleted in this commit

tt reimplementation that I already followed with the old Python tt. Previously, I just had a few feeds for testing purposes in my new config. While transferring, I "dropped" heaps of feeds that appeared to be inactive.

Thanks, @movq@www.uninformativ.de!

My backing SQLite database with indices is 8.7 MiB in size right now.

The twtxt cache is 7.6 MiB, it uses Python’s pickle module. And next to it there is a 16.0 MiB second database with all the read statuses for the old tt. Wow, super inefficient, it shouldn’t contain anything else, it’s a giant, pickled {"$hash": {"read": True/False}, …}. What the heck, why is it so big?! O_o
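Just to illustrate the shape of that structure, here is a small sketch comparing the pickled size of a `{"$hash": {"read": True/False}}` dict with a plain set that only stores the hashes of read messages. The hashes are made-up 7-character strings and the numbers say nothing about the real 16.0 MiB file; it is not a diagnosis of why the old database is so big.

```python
import pickle

# Made-up stand-ins for twt hashes: 7-character strings.
hashes = [f"{i:07x}" for i in range(10_000)]

# Layout described above: one small dict per message.
nested = {h: {"read": i % 2 == 0} for i, h in enumerate(hashes)}

# Alternative: store only the hashes that are read. Everything that is
# not in the set counts as unread.
read_only = {h for h, status in nested.items() if status["read"]}

nested_size = len(pickle.dumps(nested))
set_size = len(pickle.dumps(read_only))
print(f"nested dict: {nested_size} bytes, set of read hashes: {set_size} bytes")
```

The set is smaller simply because it drops the per-message inner dict and all unread entries.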

@movq@www.uninformativ.de You could also just use a tiling window manager. :-) As a bonus, it doesn’t waste dead space, the window utilizes the entire screen. To also get rid of panels and stuff, put the window in fullscreen mode.

@kat@yarn.girlonthemoon.xyz I have just opened the GIMP bug tracker (hosted at gitlab.gnome.org) and, I kid you not, they have deployed Anubis in front of it:

Oof.

@lyse@lyse.isobeef.org I’m glad to hear that! Yay for more clients. 😊

@lyse@lyse.isobeef.org Interesting, thanks for that list. 🤔

@david@collantes.us @prologic@twtxt.net Sorry! https://cascii.app/

@lyse@lyse.isobeef.org Bad boy! 😂 Remember, it is an extension

@movq@www.uninformativ.de Yeah, most of the graphical applications are actually KDE programs:

- KMail – e-mail client

- Okular – PDF viewer

- Gwenview – image viewer

- Dolphin – file browser

- KWallet – password manager (I want to check out `pass` one day. The most annoying thing is that when I copy a password, it says that the password has been modified and asks me whether I want to save the changes. I never do, because the password is still the same. I don’t get it.)

- KPatience – card game

- Kdenlive – video editor

- Kleopatra – certificate manager

Qt:

- VLC – video player

- Psi – Jabber client (I happily used Kopete in the past, but that is not supported anymore or so. I don’t remember.)

- sqlitebrowser – SQLite browser

Gtk:

- Firefox – web browser

- Quod Libet – music player (I should look for a better alternative. Can’t remember why I had to move away from Amarok, was it dead? There was a fork Clementine or so, but I had to drop that for some unknown reason, too.)

- Audacity – audio editor

- GIMP – image editor

These are the things that are open right now or that I could think of. Most other stuff I actually do in the terminal.

In the past™, I used the Python KDE4 bindings. That was really nice. I could pass most stuff directly in the constructor and didn’t have to call gazillions of setters, which improved the experience significantly. If I ever wanted to do GUI programming again, I’d definitely go that route. There are also great Qt bindings for Python if one wanted to avoid the KDE stuff on top. The vast majority of what I do for myself, though, is either CLI or maybe TUI. A few web shit things, but no GUIs anymore. :-)

Although, most software I use is decentish in that regard.

Is that because you mostly use Qt programs? 🤔

I wish Qt had a C API. Programming in C++ is pain. 😢

@movq@www.uninformativ.de Oh, right, a type would be good to have! :-D

@movq@www.uninformativ.de Where can I join your club? Although, most software I use is decentish in that regard.

I just noticed today that JetBrains improv^Wcompletely fucked up their new commit dialog. There’s no diff anymore where I would also be able to select which changes to stage. I guess from now on I’m going to commit exclusively from the shell. No bloody git integration anymore. >:-( This is so useless now, unbelievable.

@doesnm@doesnm.p.psf.lt 💯 👏👏👏👏👏👏

@lyse@lyse.isobeef.org (I think of pointers as “memory location + type”, but I have done so much C and Assembler by now that the whole thing feels almost trivial to me. And I would have trouble explaining these concepts, I guess. 😅 Maybe I’ll cover this topic with our new Azubis/trainees some day …)

@prologic@twtxt.net What is “ciwtuau”? I don’t understand, sorry haha

@prologic@twtxt.net So it seems!

yes @lyse@lyse.isobeef.org 😅

@kat@yarn.girlonthemoon.xyz Pointers can be a bit tricky. It also took me quite some time to wrap my head around them. Let me try to explain. It’s a pretty simple, yet very powerful concept with many facets to it.

A pointer is an indirection. At a lower level, when you have some chunk of memory, you can have some actual values sitting in there, ready for direct use. A pointer, on the other hand, points to some other location where to look for the values one’s actually after. Following that pointer is also called dereferencing the pointer.

I can’t come up with a good real-world example, so this poor comparison has to do. It’s a bit like you have a book (the real value that is being pointed to) and an ISBN referencing that book (the pointer). So, instead of sending you all these many pages from that book, I could give you just a small tag containing the ISBN. With that small piece of information, you’re able to locate the book. Probably a copy of that book and that’s where this analogy falls apart.

In contrast to that flawed comparison, with pointers it’s actually the other way around: many different pointers can point to the same value, whereas in the analogy there are many books (values) and just one ISBN (pointer).

The pointer’s target might actually be another pointer. You typically then would follow both of them. There are no limits on how long your pointer chains can become.

One important property of pointers is that they can also point into nothingness, signalling a dead end. This is typically called a null pointer. Following such a null pointer calls for big trouble: it typically crashes your program. Hence, you must never follow any null pointer.
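A small sketch of these ideas in Python, with the caveat that Python has object references rather than raw pointers, so this is only an approximation. `None` plays the role of the null pointer, and the `Box` class and `follow` function are made up for illustration.

```python
from typing import Optional


class Box:
    """Holds either a plain value or a reference to another Box."""

    def __init__(self, value: object = None, target: Optional["Box"] = None) -> None:
        self.value = value
        self.target = target  # None means: no further indirection


def follow(box: Optional[Box]) -> object:
    """Dereference a chain of Boxes until a plain value is reached."""
    if box is None:
        # The "null pointer" case: there is nothing to follow.
        raise ValueError("refusing to follow a null reference")
    while box.target is not None:
        box = box.target
    return box.value


book = Box(value="the actual value")
isbn = Box(target=book)        # points to the value
catalogue = Box(target=isbn)   # points to a pointer (a chain of two)
other_isbn = Box(target=book)  # many pointers, one value

print(follow(catalogue))
```

Without the explicit check, accessing `.target` on `None` raises an `AttributeError`, which is Python’s much friendlier version of the crash mentioned above.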

Pointers are important for example in linked lists, trees or graphs. Let’s look at a doubly linked list. One entry could be a triple consisting of (actual value, pointer to next entry, pointer to previous entry).

      _______________________
     /               ________\_______________
    ↓               ↓         |              \
    +---+---+---+   +---+---+-|-+   +---+---+-|-+
    | 7 | n | x |   | 23| n | p |   | 42| x | p |
    +---+-|-+---+   +---+-|-+---+   +---+---+---+
          |         ↑     |         ↑
           \_______/       \_______/

The “x” indicates a null pointer. So, the first element of the doubly linked list with value 7 does not have any reference to a previous element. The same is true for the next element pointer in the last element with value 42.

In the middle element with value 23, both pointers to the next (labeled “n”) and previous (labeled “p”) elements are pointing to the respective elements.

You can also see that the middle element is pointed to by two pointers: the “next” pointer in the first element and the “previous” pointer in the last element.
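The same three-element list as a Python sketch, again using references and `None` in place of real pointers and null. The names `Node`, `link` and `forwards` are made up for this example.

```python
from typing import Optional


class Node:
    """One list entry: (actual value, next entry, previous entry)."""

    def __init__(self, value: int) -> None:
        self.value = value
        self.next: Optional["Node"] = None  # None plays the role of "x"
        self.prev: Optional["Node"] = None


def link(values: list[int]) -> Optional[Node]:
    """Build a doubly linked list and return its first node."""
    head: Optional[Node] = None
    tail: Optional[Node] = None
    for value in values:
        node = Node(value)
        if tail is None:
            head = node       # first element: no previous entry
        else:
            tail.next = node  # "n" of the old last element
            node.prev = tail  # "p" of the new last element
        tail = node
    return head


def forwards(head: Optional[Node]) -> list[int]:
    """Collect all values by following the "next" references."""
    out = []
    node = head
    while node is not None:  # stop at the null reference
        out.append(node.value)
        node = node.next
    return out


first = link([7, 23, 42])
middle = first.next
last = middle.next
print(forwards(first))
```

Here `first.next` and `last.prev` both refer to the very same `middle` object, which is the “pointed to by two pointers” situation from the diagram.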

That’s it for now. There are heaps ;-) more things to tell about pointers. But it might help you a tiny bit.

@andros@twtxt.andros.dev @prologic@twtxt.net Exactly. The screenshots of the last few days show it in action. But I do not consider it ready for the world yet. @doesnm@doesnm.p.psf.lt appears to have a high pain tolerance, though. :-)

@andros@twtxt.andros.dev You use your real name as login name, too?

@prologic@twtxt.net I see this with the scouts. Luckily, not at work. But at work, I’m surrounded by techies.

@movq@www.uninformativ.de Oh my goodness! I’m so glad that I don’t have to deal with that in my family. But yeah, I guess you’re onto something with your theory. This article is also quite horrific. O_o