Honestly… not much. Have abandon two projects (both private) on Golang and one related to cryptography. My mostly languages are Python and Javascript (also can PHP). After writing code on Go i spend same time on fixing dumb errors

Insecure Robot Vacuums From Chinese Company Deebot Collect Photos and Audio to Train Their AI

Long-time Slashdot reader schwit1 shared this report from Australia’s public broadcaster ABC:

Ecovacs robot vacuums, which have been found to suffer from critical cybersecurity flaws, are collecting photos, videos and voice recordings — taken inside customers’ houses — to train the company’ … ⌘ Read more

Grand Canyon Rim To Rim: 27.90 miles, 00:28:36 average pace, 13:17:46 duration

grand canyon rim-to-rim with kelly and craig. it was a blast! a lot of firsts: crazy elevation, head lamps, poles. i had a really good time and then we started to encounter some elevation. definitely could feel the altitude as soon as there was an incline. then after so many large steps up my quads started to feel it. at one point everything seized up and i fell down. luckily craig jumped in my leg and started stretching it out which helped a lot. the rest of it from there it was slow going but only once the large steps were sparse we cruised ahead. definitely would do it again.

#running #race

nixbsd is taking a long time to build, but that’s expected. i guess a fast machine can do it in just 8h. might be about time to get my binary cache setup. my machine can only handle max-jobs=2 :(

time to give nixbsd a spin

@prologic@twtxt.net I got tricked tow times in row 🥲

@prologic@twtxt.net I think printf is a more portable option than echo -e for interpreting \t as tab. E.g. printf ‘%s\t%s\t%s’ “$url” “$time” “$text”. In general I always prefer printf over echo for anything non-trivial in unix shell scripts. See last paragraph of https://en.wikipedia.org/wiki/Echo_(command)#History

Had a great time at the Duisburg zoo yesterday including the amazing dolphin show

I believe I’d missed an f:

~/src/jenny $ git diff

diff --git a/jenny b/jenny

index ada8da2..8ae9a06 100755

--- a/jenny

+++ b/jenny

@@ -1194,7 +1194,7 @@ if __name__ == '__main__':

if args.edit:

edit_twt_file(app)

elif args.fetch:

- with DirectoryLock(f'/tmp/jenny-{getuser()}.run'):

+ with DirectoryLock(expanduser(f'~/tmp/jenny-{getuser()}.run')):

retrieve_all(app)

elif args.last_seen:

print('Feeds last seen at (times are local time), oldest first:')

@doesnm@doesnm.p.psf.lt I’ve just given it a try on android/termux and got it to work, I can’t promise it won’t break something else (because i definitely don’t know what I’m doing) but here’s what I broke 😅:

~/src/jenny $ git diff

diff --git a/jenny b/jenny

index ada8da2..8ae9a06 100755

--- a/jenny

+++ b/jenny

@@ -1194,7 +1194,7 @@ if __name__ == '__main__':

if args.edit:

edit_twt_file(app)

elif args.fetch:

- with DirectoryLock(f'/tmp/jenny-{getuser()}.run'):

+ with DirectoryLock(expanduser('~/tmp/jenny-{getuser()}.run')):

retrieve_all(app)

elif args.last_seen:

print('Feeds last seen at (times are local time), oldest first:')

and of course make sure you mkdir ~/tmp

👋 Thanks for joining us on our Sept monthly Yarn.social meetup today y’all 🙇♂️ We had @david@collantes.us @sorenpeter@darch.dk @doesnm@doesnm.p.psf.lt @falsifian@www.falsifian.org and @xuu@txt.sour.is 💪 Nice turn out! (not all at once of course, as we normally run this over 4 hours as we span many time zones!)

Things we talked about:

- Decentralised vs. Distributed

- Use of SHA256 for Twt Hash(es)

- We solved Edits! 🥳

- UUID(s) probably won’t work! (susceptible to sppofing)

- Helped @sorenpeter@darch.dk write some PHP to process/parse

User-Agentand service his feed via a custom PHP script 😅

- @falsifian@www.falsifian.org introduced himself 👌

- Talked about Merkle Trees 🌳

Did I miss anything? 🤔

Yes, that is exactly what I meant. I like that collection and “twtxt v2” feels like a departure.

Maybe there’s an advantage to grouping it into one spec, but IMO that shouldn’t be done at the same time as introducing new untested ideas.

See https://yarn.social (especially this section: https://yarn.social/#self-host) – It really doesn’t get much simpler than this 🤣

Again, I like this existing simplicity. (I would even argue you don’t need the metadata.)

That page says “For the best experience your client should also support some of the Twtxt Extensions…” but it is clear you don’t need to. I would like it to stay that way, and publishing a big long spec and calling it “twtxt v2” feels like a departure from that. (I think the content of the document is valuable; I’m just carping about how it’s being presented.)

More thoughts about changes to twtxt (as if we haven’t had enough thoughts):

- There are lots of great ideas here! Is there a benefit to putting them all into one document? Seems to me this could more easily be a bunch of separate efforts that can progress at their own pace:

1a. Better and longer hashes.

1b. New possibly-controversial ideas like edit: and delete: and location-based references as an alternative to hashes.

1c. Best practices, e.g. Content-Type: text/plain; charset=utf-8

1d. Stuff already described at dev.twtxt.net that doesn’t need any changes.

We won’t know what will and won’t work until we try them. So I’m inclined to think of this as a bunch of draft ideas. Maybe later when we’ve seen it play out it could make sense to define a group of recommended twtxt extensions and give them a name.

Another reason for 1 (above) is: I like the current situation where all you need to get started is these two short and simple documents:

https://twtxt.readthedocs.io/en/latest/user/twtxtfile.html

https://twtxt.readthedocs.io/en/latest/user/discoverability.html

and everything else is an extension for anyone interested. (Deprecating non-UTC times seems reasonable to me, though.) Having a big long “twtxt v2” document seems less inviting to people looking for something simple. (@prologic@twtxt.net you mentioned an anonymous comment “you’ve ruined twtxt” and while I don’t completely agree with that commenter’s sentiment, I would feel like twtxt had lost something if it moved away from having a super-simple core.)All that being said, these are just my opinions, and I’m not doing the work of writing software or drafting proposals. Maybe I will at some point, but until then, if you’re actually implementing things, you’re in charge of what you decide to make, and I’m grateful for the work.

() @xuu@txt.sour.is wrote:

”@bender I am also in camp no edit signals. deletes only breaks the head of a thread. all the replies are unaffected.”

I figure I could also answer every single twtxt like this, so that if the original gets edited, or deleted, at least I don’t sound foolish without knowing exactly what I replied to. 🤭

It Sounds like a good idea! should that be limited to just direct replays or can it be extended to replays to other replays, that way and With just the right amount of chain-replays, we’ll be RRrrrrrevolutionizing the way people Mailing Lists like, in no time! xD

P.S: Just a reminder! I’ve already told you not to mind my twts for the next couple of hours, right!

Finally weekend. Time to relax a bit. And today I finally have some time for my computer in my free time. Wish you all a great weekend! Take care of your self and those around you :)

if twtxt 2 is dropping gemini support, i will probably move on and spend more time on my gemini social zine protocol instead. i think the direction of the protocol is probably fine, but for me web is a tier 2 publishing channel. if the choice is between gemini and http i’m always going to pick gemini. its been a fun ride, but i guess this is where i get off.

scp(1) options.

@mckinley@twtxt.net I mean, yes! I’ve heard a lot of good things about how efficient of a tool it is for backup and all; and I’m willing to spend the time and learn. It’s just that seeing those +400 possible options was a buzz-kill. 🫣 luckily @lyse and @movq shared their most used options!

Starting a couple of new projects (geez where do I find the time?!):

HomeTunnel:

HomeTunnel is a self-hosted solution that combines secure tunneling, proxying, and automation to create your own private cloud. Utilizing Wireguard for VPN, Caddy for reverse proxying, and Traefik for service routing, HomeTunnel allows you to securely expose your home network services (such as Gitea, Poste.io, etc.) to the Internet. With seamless automation and on-demand TLS, HomeTunnel gives you the power to manage your own cloud-like environment with the control and privacy of self-hosting.

CraneOps:

craneops is an open-source operator framework, written in Go, that allows self-hosters to automate the deployment and management of infrastructure and applications. Inspired by Kubernetes operators, CraneOps uses declarative YAML Custom Resource Definitions (CRDs) to manage Docker Swarm deployments on Proxmox VE clusters.

rsync(1) but, whenever I Tab for completion and get this:

@aelaraji@aelaraji.com rsync -zaXAP is what I use all the time. But that’s all – for the rest, I have to consult the manual. 😅

@sorenpeter@darch.dk Points 2 & 3 aren’t really applicable here in the discussion of the threading model really I’m afraid. WebMentions is completely orthogonal to the discussion. Further, no-one that uses Twtxt really uses WebMentions, whilst yarnd supports the use of WebMentions, it’s very rarely used in practise (if ever) – In fact I should just drop the feature entirely.

The use of WebSub OTOH is far more useful and is used by every single yarnd pod everywhere (no that there’s that many around these days) to subscribe to feed updates in ~near real-time without having the poll constantly.

rsync(1) but, whenever I Tab for completion and get this:

@aelaraji@aelaraji.com Rsync has a ton of options and I probably still haven’t scratched the surface, but I was able to memorize the options I actually need for day-to-day work in a relatively short time. I guess I’m the opposite of you, because I don’t know any scp(1) options.

Been trying to get acquainted with rsync(1) but, whenever I Tab for completion and get this:

λ ~/ rsync –

zsh: do you wish to see all 484 possibilities (162 lines)?

I’m like: Nope! a scp -rpCq ... or whatever option salad will do just fine. 😅 [Insert: “Ain’t nobody got time fo’that!” Meme.]

@prologic@twtxt.net Thanks for writing that up!

I hope it can remain a living document (or sequence of draft revisions) for a good long time while we figure out how this stuff works in practice.

I am not sure how I feel about all this being done at once, vs. letting conventions arise.

For example, even today I could reply to twt abc1234 with “(#abc1234) Edit: …” and I think all you humans would understand it as an edit to (#abc1234). Maybe eventually it would become a common enough convention that clients would start to support it explicitly.

Similarly we could just start using 11-digit hashes. We should iron out whether it’s sha256 or whatever but there’s no need get all the other stuff right at the same time.

I have similar thoughts about how some users could try out location-based replies in a backward-compatible way (append the replyto: stuff after the legacy (#hash) style).

However I recognize that I’m not the one implementing this stuff, and it’s less work to just have everything determined up front.

Misc comments (I haven’t read the whole thing):

Did you mean to make hashes hexadecimal? You lose 11 bits that way compared to base32. I’d suggest gaining 11 bits with base64 instead.

“Clients MUST preserve the original hash” — do you mean they MUST preserve the original twt?

Thanks for phrasing the bit about deletions so neutrally.

I don’t like the MUST in “Clients MUST follow the chain of reply-to references…”. If someone writes a client as a 40-line shell script that requires the user to piece together the threading themselves, IMO we shouldn’t declare the client non-conforming just because they didn’t get to all the bells and whistles.

Similarly I don’t like the MUST for user agents. For one thing, you might want to fetch a feed without revealing your identty. Also, it raises the bar for a minimal implementation (I’m again thinking again of the 40-line shell script).

For “who follows” lists: why must the long, random tokens be only valid for a limited time? Do you have a scenario in mind where they could leak?

Why can’t feeds be served over HTTP/1.0? Again, thinking about simple software. I recently tried implementing HTTP/1.1 and it wasn’t too bad, but 1.0 would have been slightly simpler.

Why get into the nitty-gritty about caching headers? This seems like generic advice for HTTP servers and clients.

I’m a little sad about other protocols being not recommended.

I don’t know how I feel about including markdown. I don’t mind too much that yarn users emit twts full of markdown, but I’m more of a plain text kind of person. Also it adds to the length. I wonder if putting a separate document would make more sense; that would also help with the length.

Been thinking about it for the last couple of days and I would say we can make do with the shorter (#<DATETIME URL>)since it mirrors the twt-mention syntax and simply points to the OP as the topic identified by the time of posting it. Do we really need and (edit:...)and (delete:...) also?

@aelaraji@aelaraji.com This is one of the reasons why yarnd has a couple of settings with some sensible/sane defaults:

I could already imagine a couple of extreme cases where, somewhere, in this peaceful world one’s exercise of freedom of speech could get them in Real trouble (if not danger) if found out, it wouldn’t necessarily have to involve something to do with Law or legal authorities. So, If someone asks, and maybe fearing fearing for… let’s just say ‘Their well being’, would it heart if a pod just purged their content if it’s serving it publicly (maybe relay the info to other pods) and call it a day? It doesn’t have to be about some law/convention somewhere … 🤷 I know! Too extreme, but I’ve seen news of people who’d gone to jail or got their lives ruined for as little as a silly joke. And it doesn’t even have to be about any of this.

There are two settings:

$ ./yarnd --help 2>&1 | grep max-cache

--max-cache-fetchers int set maximum numnber of fetchers to use for feed cache updates (default 10)

-I, --max-cache-items int maximum cache items (per feed source) of cached twts in memory (default 150)

-C, --max-cache-ttl duration maximum cache ttl (time-to-live) of cached twts in memory (default 336h0m0s)

So yarnd pods by default are designed to only keep Twts around publicly visible on either the anonymous Frontpage or Discover View or your Timeline or the feed’s Timeline for up to 2 weeks with a maximum of 150 items, whichever get exceeded first. Any Twts over this are considered “old” and drop off the active cache.

It’s a feature that my old man @off_grid_living@twtxt.net was very strongly in support of, as was I back in the day of yarnd’s design (nothing particularly to do with Twtxt per se) that I’ve to this day stuck by – Even though there are some 😉 that have different views on this 🤣

@david@collantes.us Thanks, that’s good feedback to have. I wonder to what extent this already exists in registry servers and yarn pods. I haven’t really tried digging into the past in either one.

How interested would you be in changes in metadata and other comments in the feeds? I’m thinking of just permanently saving every version of each twtxt file that gets pulled, not just the twts. It wouldn’t be hard to do (though presenting the information in a sensible way is another matter). Compression should make storage a non-issue unless someone does something weird with their feed like shuffle the comments around every time I fetch it.

@prologic@twtxt.net what time in UTC?

I wrote some code to try out non-hash reply subjects formatted as (replyto ), while keeping the ability to use the existing hash style.

I don’t think we need to decide all at once. If clients add support for a new method then people can use it if they like. The downside of course is that this costs developer time, so I decided to invest a few hours of my own time into a proof of concept.

With apologies to @movq@www.uninformativ.de for corrupting jenny’s beautiful code. I don’t write this expecting you to incorporate the patch, because it does complicate things and might not be a direction you want to go in. But if you like any part of this approach feel free to use bits of it; I release the patch under jenny’s current LICENCE.

Supporting both kinds of reply in jenny was complicated because each email can only have one Message-Id, and because it’s possible the target twt will not be seen until after the twt referencing it. The following patch uses an sqlite database to keep track of known (url, timestamp) pairs, as well as a separate table of (url, timestamp) pairs that haven’t been seen yet but are wanted. When one of those “wanted” twts is finally seen, the mail file gets rewritten to include the appropriate In-Reply-To header.

Patch based on jenny commit 73a5ea81.

https://www.falsifian.org/a/oDtr/patch0.txt

Not implemented:

- Composing twts using the (replyto …) format.

- Probably other important things I’m forgetting.

I’m bad with faces, I know that. But I’m having a really hard time recognizing Linus in this video:

https://www.youtube.com/watch?v=4WCTGycBceg

Basically a different person to me. Is it just me or has he really changed that much? 😳

Can I get someone like maybe @xuu@txt.sour.is or @abucci@anthony.buc.ci or even @eldersnake@we.loveprivacy.club – If you have some spare time – to test this yarnd PR that upgrades the Bitcask dependency for its internal database to v2? 🙏

VERY IMPORTANT If you do; Please Please Please backup your yarn.db database first! 😅 Heaven knows I don’t want to be responsible for fucking up a production database here or there 🤣

@quark@ferengi.one Do you mean something like this?

$ ./yarnc debug ~/Public/twtxt.txt | tail -n 1

kp4zitq 2024-09-08T02:08:45Z (#wsdbfna) @<aelaraji https://aelaraji.com/twtxt.txt> My work has this thing called "compressed work", where you can **buy** extra time off (_as much as 4 additional weeks_) per year. It comes out of your pay though, so it's not exactly a 4-day work week but it could be useful, just haven't tired it yet as I'm not entirely sure how it'll affect my net pay

main.go (but it can be done on a template now, so no reason to touch the code):

@lyse@lyse.isobeef.org fully agree. I have never been a fan of relative times to begin with, so that one will go away, foh sho! :-D

Woot, yes! It works perfectly. By the time you see my twtxt, it is already at the main Ferengi.one website.

OK, OK, the third time is the charm. Maybe?

@aelaraji@aelaraji.com this is my change on main.go (but it can be done on a template now, so no reason to touch the code):

<time class="dt-published" datetime="{{ $twt.Created | date "2006-01-02T15:04:05Z07:00" }}">

{{ $twt.Created | date "2006-01-02 15:04:05 MST" }}

</time>

See https://ferengi.one. I am going to further customise things, but that’s a start.

@bender@twtxt.net LOL normally things (in the vanilla template) render like <time class="dt-published" datetime="2024-09-17T15:05:19+01:00"> 2024-09-17 14:05:19 +0000 UTC+0000 </time> the datetime=... atribute is in my local time UTC+1 then the text within the tag is in UTC+0

The thing is, I’ve been poking at the template as well, but nothing changes. I literally whole portionsm added in lorem text just to see if it would do anything, then twtxt2html -T ./layout.html <link to twtxt file> | less shows same thing as before! nothing changes. LOL I’m not sure I’m going at it the right way.

@movq@www.uninformativ.de I didn’t run the command as you recommended, but, I wiped things once more, and ran jenny -f, and this time got:

david@arrakis:~$ jenny -f

Fetching archived feed https://anthony.buc.ci/user/abucci/twtxt.txt/1 (configured as abucci, https://anthony.buc.ci/user/abucci/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2024-04.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://darch.dk/twtxt-archive.txt (configured as soren, https://darch.dk/twtxt.txt)

Fetching archived feed https://www.uninformativ.de/twtxt-old_2024-04-21_6v47cua.txt (configured as movq, https://www.uninformativ.de/twtxt.txt)

Fetching archived feed https://twtxt.net/user/prologic/twtxt.txt/1 (configured as prologic, https://twtxt.net/user/prologic/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2024-03.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://www.uninformativ.de/twtxt-old_2022-12-21_2us6qbq.txt (configured as movq, https://www.uninformativ.de/twtxt.txt)

Fetching archived feed https://twtxt.net/user/prologic/twtxt.txt/2 (configured as prologic, https://twtxt.net/user/prologic/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2024-02.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://www.uninformativ.de/twtxt-old_2022-01-14_ew5gzca.txt (configured as movq, https://www.uninformativ.de/twtxt.txt)

Fetching archived feed https://twtxt.net/user/prologic/twtxt.txt/3 (configured as prologic, https://twtxt.net/user/prologic/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2024-01.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://www.uninformativ.de/twtxt-old_2021-12-23_f6y65bq.txt (configured as movq, https://www.uninformativ.de/twtxt.txt)

Fetching archived feed https://twtxt.net/user/prologic/twtxt.txt/4 (configured as prologic, https://twtxt.net/user/prologic/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2023-12.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://www.uninformativ.de/twtxt-old_2021-12-04_e4x7yba.txt (configured as movq, https://www.uninformativ.de/twtxt.txt)

Fetching archived feed https://twtxt.net/user/prologic/twtxt.txt/5 (configured as prologic, https://twtxt.net/user/prologic/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2023-11.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://www.uninformativ.de/twtxt-old_2021-11-18_42tjxba.txt (configured as movq, https://www.uninformativ.de/twtxt.txt)

Fetching archived feed https://twtxt.net/user/prologic/twtxt.txt/6 (configured as prologic, https://twtxt.net/user/prologic/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2023-10.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://www.uninformativ.de/twtxt-old_2021-11-08_i2wnvaa.txt (configured as movq, https://www.uninformativ.de/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2023-09.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://www.uninformativ.de/twtxt-old_2021-10-23_kvwn5oa.txt (configured as movq, https://www.uninformativ.de/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2023-08.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://www.uninformativ.de/twtxt-old_2021-10-11_mljudaa.txt (configured as movq, https://www.uninformativ.de/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2023-07.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://www.uninformativ.de/twtxt-old_2021-09-22_5mkqwua.txt (configured as movq, https://www.uninformativ.de/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2023-06.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://www.uninformativ.de/twtxt-old_2021-07-27_xcnzmlq.txt (configured as movq, https://www.uninformativ.de/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2023-05.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://www.uninformativ.de/twtxt-old_2021-06-16_mtedqya.txt (configured as movq, https://www.uninformativ.de/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2023-04.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://www.uninformativ.de/twtxt-old_2021-04-29_z7lvzja.txt (configured as movq, https://www.uninformativ.de/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2023-03.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://www.uninformativ.de/twtxt-old_2021-03-19_xjabvhq.txt (configured as movq, https://www.uninformativ.de/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2023-02.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://www.uninformativ.de/twtxt-old_2021-02-24_te4a6oa.txt (configured as movq, https://www.uninformativ.de/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2023-01.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://www.uninformativ.de/twtxt-old_2021-01-26_qxgigma.txt (configured as movq, https://www.uninformativ.de/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2022-12.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://www.uninformativ.de/twtxt-old_2020-12-13_igfnala.txt (configured as movq, https://www.uninformativ.de/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2022-11.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2022-10.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2022-09.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2022-08.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2022-07.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2022-06.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2022-05.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2022-04.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2022-03.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2022-02.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2022-01.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2021-12.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2021-11.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2021-10.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2021-09.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2021-08.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2021-07.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2021-06.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2021-05.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2021-04.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2021-03.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2021-02.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2021-01.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2020-12.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Notice that @prologic@twtxt.net’s /6 is there. I found the twtxt then. Kind of odd it didn’t show before.

(#hash;#originalHash) would also work.

Maybe I’m being a bit too purist/minimalistic here. As I said before (in one of the 1372739 posts on this topic – or maybe I didn’t even send that twt, I don’t remember 😅), I never really liked hashes to begin with. They aren’t super hard to implement but they are kind of against the beauty of the original twtxt – because you need special client support for them. It’s not something that you could write manually in your

twtxt.txtfile. With @sorenpeter@darch.dk’s proposal, though, that would be possible.

Tangentially related, I was a bit disappointed to learn that the twt subject extension is now never used except with hashes. Manually-written subjects sounded so beautifully ad-hoc and organic as a way to disambiguate replies. Maybe I’ll try it some time just for fun.

@bender@twtxt.net Now that I’m thinking about it, I could just add in a cron job on my remote machine with: twtxt2html https://domain.ltd/twtxt.txt > /path/to/static_files_dir/ that way I could benefit from the ‘relative time’ portion I’m getting rid of with the -n …

@prologic@twtxt.net I’d be glad to! I’m just taking time to get well acquainted with it’s ins and out before saying something stupid 😅 Like… I’ve just noticed the -n 🫠

i have been using grapheneos for a long time (maybe 7 years now?) and i would fully reccomend it to anyone who is OK with buying a new pixel device to run it on. the install instructions are really easy to follow and can be executed on any device that supports WebUSB https://grapheneos.org/install/web

so it looks like genode has taken some inspiration from nix.. that’s a rabbit-hole for another time https://genode.org/documentation/developer-resources/package_management

Also what are the change that the same human will make two different posts within the same second?!

Just out of curiosity, What would happen someday if I (maybe trolling) edit my twtxt.txt-file manually and switch/switch a couple of twt timestamps, or add in 3 different twts manually with the same time stamp?

@prologic@twtxt.net earlier you suggested extending hashes to 11 characters, but here’s an argument that they should be even longer than that.

Imagine I found this twt one day at https://example.com/twtxt.txt :

2024-09-14T22:00Z Useful backup command: rsync -a “$HOME” /mnt/backup

and I responded with “(#5dgoirqemeq) Thanks for the tip!”. Then I’ve endorsed the twt, but it could latter get changed to

2024-09-14T22:00Z Useful backup command: rm -rf /some_important_directory

which also has an 11-character base32 hash of 5dgoirqemeq. (I’m using the existing hashing method with https://example.com/twtxt.txt as the feed url, but I’m taking 11 characters instead of 7 from the end of the base32 encoding.)

That’s what I meant by “spoofing” in an earlier twt.

I don’t know if preventing this sort of attack should be a goal, but if it is, the number of bits in the hash should be at least two times log2(number of attempts we want to defend against), where the “two times” is because of the birthday paradox.

Side note: current hashes always end with “a” or “q”, which is a bit wasteful. Maybe we should take the first N characters of the base32 encoding instead of the last N.

Code I used for the above example: https://fossil.falsifian.org/misc/file?name=src/twt_collision/find_collision.c

I only needed to compute 43394987 hashes to find it.

HTTPS is supposed to do [verification] anyway.

TLS provides verification that nobody is tampering with or snooping on your connection to a server. It doesn’t, for example, verify that a file downloaded from server A is from the same entity as the one from server B.

I was confused by this response for a while, but now I think I understand what you’re getting at. You are pointing out that with signed feeds, I can verify the authenticity of a feed without accessing the original server, whereas with HTTPS I can’t verify a feed unless I download it myself from the origin server. Is that right?

I.e. if the HTTPS origin server is online and I don’t mind taking the time and bandwidth to contact it, then perhaps signed feeds offer no advantage, but if the origin server might not be online, or I want to download a big archive of lots of feeds at once without contacting each server individually, then I need signed feeds.

feed locations [being] URLs gives some flexibility

It does give flexibility, but perhaps we should have made them URIs instead for even more flexibility. Then, you could use a tag URI,

urn:uuid:*, or a regular old URL if you wanted to. The spec seems to indicate that theurltag should be a working URL that clients can use to find a copy of the feed, optionally at multiple locations. I’m not very familiar with IP{F,N}S but if it ensures you own an identifier forever and that identifier points to a current copy of your feed, it could be a great way to fix it on an individual basis without breaking any specs :)

I’m also not very familiar with IPFS or IPNS.

I haven’t been following the other twts about signatures carefully. I just hope whatever you smart people come up with will be backwards-compatible so it still works if I’m too lazy to change how I publish my feed :-)

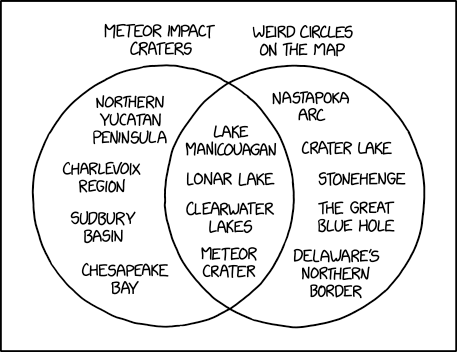

Craters

⌘ Read more

⌘ Read more

@xuu@txt.sour.is Thanks for the link. I found a pdf on one of the authors’ home pages: https://ahmadhassandebugs.github.io/assets/pdf/quic_www24.pdf . I wonder how the protocol was evaluated closer to the time it became a standard, and whether anything has changed. I wonder if network speeds have grown faster than CPU speeds since then. The paper says the performance is around the same below around 600 Mbps.

To be fair, I don’t think QUIC was ever expected to be faster for transferring a single stream of data. I think QUIC is supposed to reduce the impact of a dropped packet by making sure it only affects the stream it’s part of. I imagine QUIC still has that advantage, and this paper is showing the other side of a tradeoff.

# follow_notify = gemini://foo/bar to your feed’s metadata, so that clients who follow you can ping that URL every now and then? How would you even notice that, do you regularly read your gemini logs? 🤔

@movq@www.uninformativ.de Damn! I’m two years late to the discussion 😅 So basically, one could just make a bash script/cron job on the side for pinging non-Http feeds from time to time and the receiving end would get it IF they check their logs.

So this is a great thread. I have been thinking about this too.. and what if we are coming at it from the wrong direction? Identity being tied to a given URL has always been a pain point. If i get a new URL its almost as if i have a new identity because not only am I serving at a new location but all my previous communications are broken because the hashes are all wrong.

What if instead we used this idea of signatures to thread the URLs together into one identity? We keep the URL to Hash in place. Changing that now is basically a no go. But we can create a signature chain that can link identities together. So if i move to a new URL i update the chain hosted by my primary identity to include the new URL. If i have an archived feed that the old URL is now dead, we can point to where it is now hosted and use the current convention of hashing based on the first url:

The signature chain can also be used to rotate to new keys over time. Just sign in a new key or revoke an old one. The prior signatures remain valid within the scope of time the signatures were made and the keys were active.

The signature file can be hosted anywhere as long as it can be fetched by a reasonable protocol. So say we could use a webfinger that directs to the signature file? you have an identity like frank@beans.co that will discover a feed at some URL and a signature chain at another URL. Maybe even include the most recent signing key?

From there the client can auto discover old feeds to link them together into one complete timeline. And the signatures can validate that its all correct.

I like the idea of maybe putting the chain in the feed preamble and keeping the single self contained file.. but wonder if that would cause lots of clutter? The signature chain would be something like a log with what is changing (new key, revoke, add url) and a signature of the change + the previous signature.

# chain: ADDKEY kex14zwrx68cfkg28kjdstvcw4pslazwtgyeueqlg6z7y3f85h29crjsgfmu0w

# sig: BEGIN SALTPACK SIGNED MESSAGE. ...

# chain: ADDURL https://txt.sour.is/user/xuu

# sig: BEGIN SALTPACK SIGNED MESSAGE. ...

# chain: REVKEY kex14zwrx68cfkg28kjdstvcw4pslazwtgyeueqlg6z7y3f85h29crjsgfmu0w

# sig: ...

I wonder if bento has slightly missed the key to being a total genius approach to host management. ok hear me out. each node periodically pulls configuration from a coordination node that hosts a binary cache. the admin may make changes and pre-build them maybe kick off an update task manually if they want, but the point is there’s an automated checkin. for my case, the device I have available for coordination isn’t really capable of hosting a binary cache for any of my other machines. the nix store for my dev machine is larger than the entire disk of the coordinator! and due to the yearly heat my best machine can’t be reliably powered on all the time. so i started thinking to myself, “self, what if instead of having a central coordinator we fetched configuration from a reliable git mirror (maybe git+torrent some day) and consume it as a flake. the source could even be swapped out using a flake registry (so you don’t even have to commit to self-hosting anything other than a json file). then managed hosts only have to be setup to consume the registry and the shared flake (which registers the update agent) and DONE?”