I’m seeing strange lights in the sky. None of my cameras are sensitive enough to make a video.

It’s probably one of two things:

- A ship on the nearby river with a lightshow going. It’s rare but it happens.

- A steep hill nearby, cars driving “upwards”, and since super bright LED lights are normal nowadays, they reflect from the clouds.

Either way, looks fancy.

@doesnm@doesnm.p.psf.lt May I ask which hardware you have? SSD or HDD? How much RAM?

I might be spoiled and very privileged here. Even though my PC is almost 12 years old now, it does have an SSD and tons of RAM (i.e., lots of I/O cache), so starting mutt and opening the mailbox takes about 1-2 seconds here. I hardly even notice it. But I understand that not everybody has fast machines like that. 🫤

Pinellas County - 4 x 5’ (hard) [1’]: 5.00 miles, 00:09:42 average pace, 00:48:26 duration

nothing to note.

#running

@bender@twtxt.net I try to avoid editing. I guess I would write 5/4, 6/4, etc, and hopefully my audience would be sympathetic to my failing.

Anyway, I don’t think my eccentric decision to number my twts in the style of other social media platforms is the only context where someone might write 1/4 not meaning a quarter. E.g. January 4, to Americans.

I’m happy to keep overthinking this for as long as you are :-P

@bender@twtxt.net @prologic@twtxt.net I’m not exactly asking yarnd to change. If you are okay with the way it displayed my twts, then by all means, leave it as is. I hope you won’t mind if I continue to write things like 1/4 to mean “first out of four”.

What has text/markdown got to do with this? I don’t think Markdown says anything about replacing 1/4 with ¼, or other similar transformations. It’s not needed, because ¼ is already a unicode character that can simply be directly inserted into the text file.

What’s wrong with my original suggestion of doing the transformation before the text hits the twtxt.txt file? @prologic@twtxt.net, I think it would achieve what you are trying to achieve with this content-type thing: if someone writes 1/4 on a yarnd instance or any other client that wants to do this, it would get transformed, and other clients simply wouldn’t do the transformation. Every client that supports displaying unicode characters, including Jenny, would then display ¼ as ¼.

Alternatively, if you prefer yarnd to pretty-print all twts nicely, even ones from simpler clients, that’s fine too and you don’t need to change anything. My 1/4 -> ¼ thing is nothing more than a minor irritation which probably isn’t worth overthinking.

One day to go, or nearly, for those heading there as early as this afternoon! #utopiales2024

@prologic@twtxt.net I’m not a yarnd user, so it doesn’t matter a whole lot to me, but FWIW I’m not especially keen on changing how I format my twts to work around yarnd’s quirks.

I wonder if this kind of postprocessing would fit better between composing (via yarnd’s UI) and publishing. So, if a yarnd user types 1/4, it could get changed to ¼ in the twtxt.txt file for everyone to see, not just people reading through yarnd. But when I type 1/4, meaning first out of four, as a non-yarnd user, the meaning wouldn’t get corrupted. I can always type ¼ directly if that’s what I really intend.

(This twt might be easier to understand if you read it without any transformations :-P)

Anyway, again, I’m not a yarnd user, so do what you will, just know you might not be seeing exactly what I meant.

@prologic@twtxt.net I wrote 1/4 (one slash four) by which I meant “the first out of four”. twtxt.net is showing it as ¼, a single character that IMO doesn’t have that same meaning (it means 0.25). Similarly, 3/4 got replaced with ¾ in another twt. It’s not a big deal. It just looks a little wrong, especially beside the 2/4 and 4/4 in my other two twts.

Simplified twtxt - I want to suggest some dogmas or commandments for twtxt, from where we can work our way back to how to implement different features like replies/threads:

It’s a text file, so you must be able to write it by hand (ie. no app logic) and read by eye. If you edit a post you change the content not the timestamp. Otherwise it will be considered a new post.

The order of lines in a twtxt.txt must not hold any significance. The file is a container and each line an atomic piece of information. You should be able to run sort on a twtxt.txt and it should still work.

Transport protocol should not matter, as long as the file served is the same. HTTP and HTTPS are preferred, so it is suggested that feeds served via Gopher or Gemini also provide http(s).

Do we need more commandments?
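The "you should be able to run sort on it" rule can be checked mechanically. A minimal Python sketch, assuming each non-comment line is a timestamp, a tab, and the text, and that timestamps are RFC 3339; the sample feed and the function names are made up for illustration:

```python
from datetime import datetime

def parse_feed(text):
    """Return (timestamp, message) pairs, skipping blank lines and # comments."""
    twts = []
    for line in text.splitlines():
        if not line.strip() or line.startswith("#"):
            continue
        stamp, _, message = line.partition("\t")
        # Older Pythons reject a trailing "Z" in fromisoformat(), so normalise it.
        twts.append((datetime.fromisoformat(stamp.replace("Z", "+00:00")), message))
    return twts

def timeline(text):
    """Order twts by their own timestamps, not by their position in the file."""
    return sorted(parse_feed(text))

# Hypothetical feed, newest line first.
feed = (
    "# nick = example\n"
    "2024-09-29T13:30:00Z\tHello World!\n"
    "2024-09-28T08:00:00Z\tAn older post\n"
)
# Roughly what running sort on the file would produce.
shuffled = "\n".join(sorted(feed.splitlines())) + "\n"
```

If a client builds its timeline this way, timeline(feed) and timeline(shuffled) come out identical, which is the property the commandment asks for.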

There, workout done, I’m fully charged up before going to donate some quality blood ^^ #EFS #dondusang. Level 7 of the #lafay method is too long, though; I don’t have enough time to do it properly. Oh well, back to no. 6, and I’ll add one randomly drawn exercise done to exhaustion. Or else I’ll get the #TRX back out; I need to find somewhere to hang it. #sport #training

gg=G and to va", ci", di{... in vim the other day 😆 Life will never be the same, I can feel it. ref

@aelaraji@aelaraji.com one i use quite frequently is when i have a list of items (1 per line) and want them sorted but only keep those which are unique: ggV}:sort u

Installing Devuan 3.1 and Migrating to Ceres | https://starbreaker.org/blog/tech/installing-devuan-31-migrating-ceres/index.html

I swear I did write up an algorithm for it at some point; I think it is lost in a git comment someplace. I’ll put together some pseudo/Go code this week.

Super simple:

Making a reply:

- If yarn has one use that. (Maybe do collision check?)

- Make hash of twt raw no truncation.

- Check local cache for shortest without collision

- in SQL:

select len(subject) where head_full_hash like subject || '%'

Threading:

- Get full hash of head twt

- Search for twts

- in SQL:

head_full_hash like subject || '%' and created_on > head_timestamp

The assumption being replies will be for the most recent head. If replying to an older one it will use a longer hash.
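The two steps above could be sketched in Python roughly like this. It is an illustration only, not the promised pseudo/Go code: the in-memory lists stand in for the local cache, and the function names, the dict keys and the minimum length of 7 are assumptions.

```python
def shortest_unique_prefix(full_hash, known_hashes, min_len=7):
    """Return the shortest prefix of full_hash that no other known hash shares."""
    others = [h for h in known_hashes if h != full_hash]
    for length in range(min_len, len(full_hash) + 1):
        prefix = full_hash[:length]
        if not any(h.startswith(prefix) for h in others):
            return prefix
    return full_hash  # only reachable if another hash starts with the whole of full_hash

def thread(head_full_hash, head_timestamp, twts):
    """Mirror of: head_full_hash like subject || '%' and created_on > head_timestamp."""
    return [
        t for t in twts
        if head_full_hash.startswith(t["subject"]) and t["created_on"] > head_timestamp
    ]
```

Timestamps are compared as plain values here, so they need to be in one comparable form (for example all UTC strings in the same format).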

If we stuck with Blake2b for Twt Hash(es); what do we think we need to reasonably go to in bit length/size?

=> https://gist.mills.io/prologic/194993e7db04498fa0e8d00a528f7be6

e.g: (turns out @xuu@txt.sour.is is right about Blake2b being easy/simple too!):

$ printf "%s\t%s\t%s" "https://example.com/twtxt.txt" "2024-09-29T13:30:00Z" "Hello World!" | b2sum -l 32 -t | awk '{ print $1 }'

7b8b79dd
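For clients that are not shell scripts, the same idea in Python might look like the sketch below. It assumes that hashlib's digest_size corresponds to the -l option of b2sum (32 bits is 4 bytes); the function name is made up, and the output has not been compared against the value printed above.

```python
import hashlib

def twt_hash_hex(url, timestamp, text, bits=32):
    """BLAKE2b over url, timestamp and text joined by tabs, as lowercase hex."""
    # Like the printf above: tab-separated, no trailing newline.
    payload = "\t".join((url, timestamp, text)).encode("utf-8")
    return hashlib.blake2b(payload, digest_size=bits // 8).hexdigest()

print(twt_hash_hex("https://example.com/twtxt.txt", "2024-09-29T13:30:00Z", "Hello World!"))
```

Changing bits is then all it takes to try out longer hashes.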

@prologic@twtxt.net Regarding the new way of generating twt-hashes, to me it makes more sense to use tabs as separator instead of spaces, since then you can just copy/paste a line directly from a twtxt file that already has a tab between timestamp and message. But tabs might be hard to “type” when you are in a terminal, since it will activate autocomplete…🤔

Another thing, it seems that you suggest we only use the domain in the hash-creation and not the full path to the twtxt.txt

$ echo -e "https://example.com 2024-09-29T13:30:00Z Hello World!" | sha256sum - | awk '{ print $1 }' | base64 | head -c 12

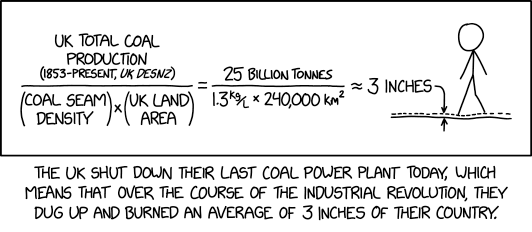

UK Coal

⌘ Read more

+1 👆

Gentlemen, I have a PDF file (1.5MB) from which I want to select and copy text, but it’s locked, preventing this. Until now, all I could do was write it out by hand or screenshot the text as an image.

Is there any software that opens pdf format for copying and pasting of the text?

More thoughts about changes to twtxt (as if we haven’t had enough thoughts):

1. There are lots of great ideas here! Is there a benefit to putting them all into one document? Seems to me this could more easily be a bunch of separate efforts that can progress at their own pace:

1a. Better and longer hashes.

1b. New possibly-controversial ideas like edit: and delete: and location-based references as an alternative to hashes.

1c. Best practices, e.g. Content-Type: text/plain; charset=utf-8

1d. Stuff already described at dev.twtxt.net that doesn’t need any changes.

We won’t know what will and won’t work until we try them. So I’m inclined to think of this as a bunch of draft ideas. Maybe later when we’ve seen it play out it could make sense to define a group of recommended twtxt extensions and give them a name.

Another reason for 1 (above) is: I like the current situation where all you need to get started is these two short and simple documents:

https://twtxt.readthedocs.io/en/latest/user/twtxtfile.html

https://twtxt.readthedocs.io/en/latest/user/discoverability.html

and everything else is an extension for anyone interested. (Deprecating non-UTC times seems reasonable to me, though.) Having a big long “twtxt v2” document seems less inviting to people looking for something simple. (@prologic@twtxt.net you mentioned an anonymous comment “you’ve ruined twtxt” and while I don’t completely agree with that commenter’s sentiment, I would feel like twtxt had lost something if it moved away from having a super-simple core.)

All that being said, these are just my opinions, and I’m not doing the work of writing software or drafting proposals. Maybe I will at some point, but until then, if you’re actually implementing things, you’re in charge of what you decide to make, and I’m grateful for the work.

Testing my internet... 3, 2, 1… #Barcelona

@prologic@twtxt.net does that include mine? otherwise it would make them 8 and 5, maybe even throw off your maths by 0.00001% 😆 … and, come on! 1.04% seems like a good ratio considering how many gopher holes and gem capsules compared to how many Web servers out there in the world 😂

Gemini/Gopher Twtxt feeds account for less than 1% in existence:

$ total=$(inspect-db yarns.db | jq -r '.Value.URL' | awk -F'//' '{if ($1 ~ /^https?/) print "http/https:"; else print $1}' | sort | uniq -c | awk '{sum+=$1} END {print sum}'); inspect-db yarns.db | jq -r '.Value.URL' | awk -F'//' '{if ($1 ~ /^https?/) print "http/https:"; else print $1}' | sort | uniq -c | awk -v total="$total" '{printf "%d %s %.2f%%\n", $1, $2, ($1/total)*100}' | sort -r

7 gemini: 0.66%

4 gopher: 0.38%

1046 http/https: 98.96%
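The same tally without the jq/awk pipeline, as a small Python sketch. It assumes the feed URLs are already available as a plain list; reading them out of yarns.db is not shown, and the function name is made up.

```python
from collections import Counter
from urllib.parse import urlsplit

def protocol_shares(urls):
    """Map each protocol group to (count, percentage of all feeds)."""
    counts = Counter()
    for url in urls:
        scheme = urlsplit(url).scheme
        # Lump http and https together, as the awk script does.
        counts["http/https" if scheme in ("http", "https") else scheme] += 1
    total = sum(counts.values())
    return {name: (n, round(100 * n / total, 2)) for name, n in counts.items()}
```

With 7 gemini, 4 gopher and 1046 http(s) feeds (1057 in total) this gives 0.66%, 0.38% and 98.96%, matching the output above.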

Good writeup, @anth@a.9srv.net! I agree with most of your points.

3.2 Timestamps: I feel no need to mandate UTC. Timezones are fine with me. But I could also live with this new restriction. I fail to see, though, how this change would make things any easier compared to the original format.

3.4 Multi-Line Twts: What exactly do you think are bad things with multi-lines?

4.1 Hash Generation: I do like the idea with a new uuid metadata field! Any thoughts on two feeds selecting the same UUID for whatever reason? Well, the same could happen today with url.

5.1 Reply to last & 5.2 More work to backtrack: I do not understand anything you’re saying. Can you rephrase that?

8.1 Metadata should be collected up front: I generally agree, but if the uuid metadata field were a feed URL and no real UUID, there should be probably an exception to change the feed URL mid-file after relocation.

@sorenpeter@darch.dk not even this: https://twtxt.net/media/AzUmzTN5YEJdt4VPeeprjB.png?full=1

Some more arguments for a location-based threading model over a content-based one:

The format:

(#<DATE URL>) or (@<DATE URL>) both make sense: # as prefix is for a hashtag, like we already have with the (#twthash), and @ as prefix denotes that this is a mention of a specific post in a feed, and not just the feed in general. Using either can make implementation easier, since most clients already have this kind of filtering.

Having something like (#<DATE URL>) will also make mentions via webmentions for twtxt easier to implement, since there is no need for looking up the #twthash. This will also make it possible to build third-party twt-mention services.

Supporting twt/webmentions will also increase discoverability, as a way to know about both replies and feed mentions from feeds that you don’t follow.
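To show how little filtering a client would need, here is a hedged Python sketch that pulls such references out of a twt. The exact syntax is still only a proposal, so the regular expression (one space between DATE and URL, no spaces inside either) and the function name are assumptions.

```python
import re

# (#<DATE URL>) or (@<DATE URL>): prefix, timestamp, a single space, feed URL.
REF = re.compile(r"\((?P<kind>[#@])<(?P<date>\S+) (?P<url>[^>\s]+)>\)")

def find_refs(text):
    """Return (prefix, date, url) for every location-based reference in text."""
    return [(m["kind"], m["date"], m["url"]) for m in REF.finditer(text)]

print(find_refs("(#<2024-09-29T13:30:00Z https://example.com/twtxt.txt>) Nice one!"))
```

A legacy (#twthash) subject does not match this pattern, so both styles could sit side by side in one feed.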

@aelaraji@aelaraji.com Rsync has a ton of options and I probably still haven’t scratched the surface, but I was able to memorize the options I actually need for day-to-day work in a relatively short time. I guess I’m the opposite of you, because I don’t know any scp(1) options.

Been trying to get acquainted with rsync(1) but, whenever I Tab for completion and get this:

λ ~/ rsync --

zsh: do you wish to see all 484 possibilities (162 lines)?

I’m like: Nope! a scp -rpCq ... or whatever option salad will do just fine. 😅 [Insert: “Ain’t nobody got time fo’that!” Meme.]

LMAO 🤣 … I’ve been scrolling through mutt(1) man page and found this:

BUGS

None. Mutts have fleas, not bugs.

@prologic@twtxt.net Thanks for writing that up!

I hope it can remain a living document (or sequence of draft revisions) for a good long time while we figure out how this stuff works in practice.

I am not sure how I feel about all this being done at once, vs. letting conventions arise.

For example, even today I could reply to twt abc1234 with “(#abc1234) Edit: …” and I think all you humans would understand it as an edit to (#abc1234). Maybe eventually it would become a common enough convention that clients would start to support it explicitly.

Similarly we could just start using 11-digit hashes. We should iron out whether it’s sha256 or whatever but there’s no need to get all the other stuff right at the same time.

I have similar thoughts about how some users could try out location-based replies in a backward-compatible way (append the replyto: stuff after the legacy (#hash) style).

However I recognize that I’m not the one implementing this stuff, and it’s less work to just have everything determined up front.

Misc comments (I haven’t read the whole thing):

Did you mean to make hashes hexadecimal? You lose 11 bits that way compared to base32. I’d suggest gaining 11 bits with base64 instead.

“Clients MUST preserve the original hash” — do you mean they MUST preserve the original twt?

Thanks for phrasing the bit about deletions so neutrally.

I don’t like the MUST in “Clients MUST follow the chain of reply-to references…”. If someone writes a client as a 40-line shell script that requires the user to piece together the threading themselves, IMO we shouldn’t declare the client non-conforming just because they didn’t get to all the bells and whistles.

Similarly I don’t like the MUST for user agents. For one thing, you might want to fetch a feed without revealing your identity. Also, it raises the bar for a minimal implementation (I’m again thinking of the 40-line shell script).

For “who follows” lists: why must the long, random tokens be only valid for a limited time? Do you have a scenario in mind where they could leak?

Why can’t feeds be served over HTTP/1.0? Again, thinking about simple software. I recently tried implementing HTTP/1.1 and it wasn’t too bad, but 1.0 would have been slightly simpler.

Why get into the nitty-gritty about caching headers? This seems like generic advice for HTTP servers and clients.

I’m a little sad about other protocols being not recommended.

I don’t know how I feel about including markdown. I don’t mind too much that yarn users emit twts full of markdown, but I’m more of a plain text kind of person. Also it adds to the length. I wonder if putting it in a separate document would make more sense; that would also help with the length.
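The "11 bits" in the hexadecimal comment above is just bits per character times 11 characters. A tiny Python check, assuming every character of the hash carries a full symbol of the alphabet:

```python
import math

def hash_bits(chars, alphabet_size):
    """Bits of hash carried by `chars` characters from an alphabet of the given size."""
    return chars * int(math.log2(alphabet_size))

for name, size in (("hex", 16), ("base32", 32), ("base64", 64)):
    print(name, hash_bits(11, size))
```

That is 44 bits for hex, 55 for base32 and 66 for base64, so each step is worth 11 bits at this length.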

Had to build a list of all feeds (that I follow) and all twts in them and there are two collisions already:

$ ./stats

Saw 58263 hashes

7fqcxaa

https://twtxt.net/user/justamoment/twtxt.txt

https://twtxt.net/user/prologic/twtxt.txt

ntnakqa

https://twtxt.net/user/prologic/twtxt.txt

https://twtxt.net/user/thecanine/twtxt.txt

Namely:

$ jenny -D https://twtxt.net/user/justamoment/twtxt.txt | grep 7fqcxaa

[7fqcxaa] [2022-12-28 04:53:30+00:00] [(#pmuqoca) @prologic@twtxt.net I checked the GitHub discussion, it became a request to join forces.

Do you plan on having them join?

Also for the name, how about:

- “progit” or “prologit” (prologic official hard fork)

- “git-stance” (git instance)

- “GitTree” (Gitea inspired, maybe to related)

- “Gitomata” (git automata)

- “Git.Source”

- “Forgor” (forgit is taken so I forgor) 🤣

- “SweetGit” (as salty chat)

- “Pepper Git” (other ingredients) 😉

- “GitHeart” (core of git with a GitHub sounding name)

- “GitTaka” (With music in mind)

Ok, enough fun… Hope this helps sprout some ideas from others if nothing is to your taste.]

$ jenny -D https://twtxt.net/user/prologic/twtxt.txt/5 | grep 7fqcxaa

[7fqcxaa] [2022-02-25 21:14:45+00:00] [(#bqq6fxq) It’s handled by blue Monday]

And:

$ jenny -D https://twtxt.net/user/thecanine/twtxt.txt | grep ntnakqa

[ntnakqa] [2022-01-23 10:24:09+00:00] [(#2wh7r4q) @prologic@twtxt.net I know, I was just hoping it might have also gotten fixed by that change, by some kind of backend miracles. 😂]

$ jenny -D https://twtxt.net/user/prologic/twtxt.txt/1 | grep ntnakqa

[ntnakqa] [2024-02-27 05:51:50+00:00] [(#otuupfq) @shreyan@twtxt.net Ahh 👌]
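Two collisions among 58263 hashes is about what the birthday approximation predicts. A rough Python sketch, assuming the 7-character hashes carry 31 bits (six full base32 characters plus a final character that only holds one bit) and behave like uniform random values:

```python
def expected_collisions(n, bits):
    """Expected number of colliding pairs among n uniformly random `bits`-bit values."""
    return n * (n - 1) / 2 / 2 ** bits

print(round(expected_collisions(58263, 31), 2))  # about 0.79
```

So on average a bit under one colliding pair is expected at this size, and the number of pairs grows with the square of the number of twts.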

Alright, before I go and watch Formula 1 😅, I made two PRs regarding the two “competing” ideas:

- https://git.mills.io/yarnsocial/yarn/pulls/1179 – (replyto:…)

- https://git.mills.io/yarnsocial/yarn/pulls/1180 – (edit:…) and (delete:…)

As a first step, this summarizes my current understanding. Please comment! 😊

@aelaraji@aelaraji.com This is one of the reasons why yarnd has a couple of settings with some sensible/sane defaults:

I could already imagine a couple of extreme cases where, somewhere, in this peaceful world one’s exercise of freedom of speech could get them in Real trouble (if not danger) if found out, it wouldn’t necessarily have to involve something to do with Law or legal authorities. So, If someone asks, and maybe fearing for… let’s just say ‘Their well being’, would it hurt if a pod just purged their content if it’s serving it publicly (maybe relay the info to other pods) and call it a day? It doesn’t have to be about some law/convention somewhere … 🤷 I know! Too extreme, but I’ve seen news of people who’d gone to jail or got their lives ruined for as little as a silly joke. And it doesn’t even have to be about any of this.

There are two settings:

$ ./yarnd --help 2>&1 | grep max-cache

--max-cache-fetchers int set maximum numnber of fetchers to use for feed cache updates (default 10)

-I, --max-cache-items int maximum cache items (per feed source) of cached twts in memory (default 150)

-C, --max-cache-ttl duration maximum cache ttl (time-to-live) of cached twts in memory (default 336h0m0s)

So yarnd pods by default are designed to only keep Twts around publicly visible on either the anonymous Frontpage or Discover View or your Timeline or the feed’s Timeline for up to 2 weeks with a maximum of 150 items, whichever gets exceeded first. Any Twts over this are considered “old” and drop off the active cache.
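The effect of those two limits can be illustrated with a few lines of Python. This is not yarnd’s code, only a sketch of "at most 150 items per feed, none older than 336 hours"; the function name and the (created, text) tuples are made up.

```python
from datetime import datetime, timedelta, timezone

def prune(twts, now, max_items=150, max_ttl=timedelta(hours=336)):
    """Keep the newest max_items twts of one feed that are no older than max_ttl.

    twts is a list of (created, text) tuples with timezone-aware datetimes.
    """
    fresh = [t for t in twts if now - t[0] <= max_ttl]
    fresh.sort(key=lambda t: t[0], reverse=True)  # newest first
    return fresh[:max_items]
```

A busy feed hits the item limit first and a quiet one hits the two-week limit first; either way the older twts drop out.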

It’s a feature that my old man @off_grid_living@twtxt.net was very strongly in support of, as was I back in the day of yarnd’s design (nothing particularly to do with Twtxt per se) that I’ve to this day stuck by – Even though there are some 😉 that have different views on this 🤣

Apple A16 SoC Now Manufactured In Arizona

“Apple has begun manufacturing its A16 SoC at the newly-opened TSMC Fab 21 in Arizona,” writes Slashdot reader NoMoreACs. AppleInsider reports: According to sources of Tim Culpan, Phase 1 of TSMC’s Fab 21 in Arizona is making the A16 SoC of the iPhone 14 Pro in “small, but significant, numbers. The production is largely a test for the facility at this stage, but more production is expected … ⌘ Read more

There’s a simple reason all the current hashes end in a or q: the hash is 256 bits, the base32 encoding chops that into groups of 5 bits, and 256 isn’t divisible by 5. The last character of the base32 encoding just has that left-over single bit (256 mod 5 = 1).

So I agree with #3 below, but do you have a source for #1, #2 or #4? I would expect any lack of variability in any part of a hash function’s output would make it more vulnerable to attacks, so designers of hash functions would want to make the whole output vary as much as possible.

Other than the divisible-by-5 thing, my current intuition is it doesn’t matter what part you take.
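The a-or-q observation is easy to check empirically. A short Python sketch, assuming a 256-bit BLAKE2b digest encoded as unpadded lowercase base32 (the function name is made up):

```python
import base64
import hashlib

def last_base32_char(data: bytes) -> str:
    """Last character of the unpadded, lowercase base32 form of a 256-bit digest."""
    digest = hashlib.blake2b(data, digest_size=32).digest()
    encoded = base64.b32encode(digest).decode("ascii").rstrip("=").lower()
    return encoded[-1]

# Try a thousand different inputs and collect the final characters seen.
print({last_base32_char(str(i).encode()) for i in range(1000)})
```

The final character encodes one real bit followed by four zero padding bits, so its value is either 0 (a) or 16 (q).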

Taking the last n characters of a base32 encoded hash instead of the first n can be problematic for several reasons:

Hash Structure: Hashes are typically designed so that their outputs have specific statistical properties. The first few characters often have more entropy or variability, meaning they are less likely to have patterns. The last characters may not maintain this randomness, especially if the encoding method has a tendency to produce less varied endings.

Collision Resistance: When using hashes, the goal is to minimize the risk of collisions (different inputs producing the same output). By using the first few characters, you leverage the full distribution of the hash. The last characters may not distribute in the same way, potentially increasing the likelihood of collisions.

Encoding Characteristics: Base32 encoding has a specific structure and padding that might influence the last characters more than the first. If the data being hashed is similar, the last characters may be more similar across different hashes.

Use Cases: In many applications (like generating unique identifiers), the beginning of the hash is often the most informative and varied. Relying on the end might reduce the uniqueness of generated identifiers, especially if a prefix has a specific context or meaning.

In summary, using the first n characters generally preserves the intended randomness and collision resistance of the hash, making it a safer choice in most cases.

@quark@ferengi.one Do you mean something like this?

$ ./yarnc debug ~/Public/twtxt.txt | tail -n 1

kp4zitq 2024-09-08T02:08:45Z (#wsdbfna) @<aelaraji https://aelaraji.com/twtxt.txt> My work has this thing called "compressed work", where you can **buy** extra time off (_as much as 4 additional weeks_) per year. It comes out of your pay though, so it's not exactly a 4-day work week but it could be useful, just haven't tired it yet as I'm not entirely sure how it'll affect my net pay

@prologic@twtxt.net I saw those, yes. I tried using yarnc, and it would work for a simple twtxt. Now, for a more convoluted one it truly becomes a nightmare using that tool for the job. I know there are talks about changing this hash, so this might be a moot point right now, but it would be nice to have a tool that:

- Would calculate the hash of a twtxt in a file.

- Would calculate all hashes on a twtxt.txt (local and remote).

Again, something lovely to have after any looming changes occur.
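Until such a tool exists, something along these lines could be a starting point. It is a hedged Python sketch based on my reading of the current Twt Hash extension (BLAKE2b-256 over URL, timestamp and text joined by newlines, base32 without padding, lowercased, last 7 characters). It does no timestamp normalisation and no fetching of remote feeds, so its results may differ from what yarnc prints; both function names are made up.

```python
import base64
import hashlib

def current_twt_hash(feed_url, timestamp, text):
    """Hash one twt the way the current scheme is understood to work (see assumptions)."""
    payload = f"{feed_url}\n{timestamp}\n{text}".encode("utf-8")
    digest = hashlib.blake2b(payload, digest_size=32).digest()
    return base64.b32encode(digest).decode("ascii").rstrip("=").lower()[-7:]

def feed_hashes(feed_url, feed_text):
    """Return (hash, line) for every twt line in the contents of a twtxt.txt."""
    results = []
    for line in feed_text.splitlines():
        if not line.strip() or line.startswith("#"):
            continue  # skip blank lines and comments/metadata
        timestamp, tab, text = line.partition("\t")
        if tab:  # ignore lines without the timestamp<TAB>text shape
            results.append((current_twt_hash(feed_url, timestamp, text), line))
    return results
```

For a local file, pass open(path, encoding="utf-8").read() together with the URL the feed is published under, since the URL is part of the hash.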

@bender@twtxt.net LOL normally things (in the vanilla template) render like <time class="dt-published" datetime="2024-09-17T15:05:19+01:00"> 2024-09-17 14:05:19 +0000 UTC+0000 </time> the datetime=... attribute is in my local time UTC+1 then the text within the tag is in UTC+0

The thing is, I’ve been poking at the template as well, but nothing changes. I literally changed whole portions and added in lorem text just to see if it would do anything, then twtxt2html -T ./layout.html <link to twtxt file> | less shows the same thing as before! Nothing changes. LOL I’m not sure I’m going at it the right way.

@movq@www.uninformativ.de I didn’t run the command as you recommended, but, I wiped things once more, and ran jenny -f, and this time got:

david@arrakis:~$ jenny -f

Fetching archived feed https://anthony.buc.ci/user/abucci/twtxt.txt/1 (configured as abucci, https://anthony.buc.ci/user/abucci/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2024-04.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://darch.dk/twtxt-archive.txt (configured as soren, https://darch.dk/twtxt.txt)

Fetching archived feed https://www.uninformativ.de/twtxt-old_2024-04-21_6v47cua.txt (configured as movq, https://www.uninformativ.de/twtxt.txt)

Fetching archived feed https://twtxt.net/user/prologic/twtxt.txt/1 (configured as prologic, https://twtxt.net/user/prologic/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2024-03.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://www.uninformativ.de/twtxt-old_2022-12-21_2us6qbq.txt (configured as movq, https://www.uninformativ.de/twtxt.txt)

Fetching archived feed https://twtxt.net/user/prologic/twtxt.txt/2 (configured as prologic, https://twtxt.net/user/prologic/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2024-02.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://www.uninformativ.de/twtxt-old_2022-01-14_ew5gzca.txt (configured as movq, https://www.uninformativ.de/twtxt.txt)

Fetching archived feed https://twtxt.net/user/prologic/twtxt.txt/3 (configured as prologic, https://twtxt.net/user/prologic/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2024-01.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://www.uninformativ.de/twtxt-old_2021-12-23_f6y65bq.txt (configured as movq, https://www.uninformativ.de/twtxt.txt)

Fetching archived feed https://twtxt.net/user/prologic/twtxt.txt/4 (configured as prologic, https://twtxt.net/user/prologic/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2023-12.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://www.uninformativ.de/twtxt-old_2021-12-04_e4x7yba.txt (configured as movq, https://www.uninformativ.de/twtxt.txt)

Fetching archived feed https://twtxt.net/user/prologic/twtxt.txt/5 (configured as prologic, https://twtxt.net/user/prologic/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2023-11.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://www.uninformativ.de/twtxt-old_2021-11-18_42tjxba.txt (configured as movq, https://www.uninformativ.de/twtxt.txt)

Fetching archived feed https://twtxt.net/user/prologic/twtxt.txt/6 (configured as prologic, https://twtxt.net/user/prologic/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2023-10.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://www.uninformativ.de/twtxt-old_2021-11-08_i2wnvaa.txt (configured as movq, https://www.uninformativ.de/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2023-09.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://www.uninformativ.de/twtxt-old_2021-10-23_kvwn5oa.txt (configured as movq, https://www.uninformativ.de/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2023-08.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://www.uninformativ.de/twtxt-old_2021-10-11_mljudaa.txt (configured as movq, https://www.uninformativ.de/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2023-07.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://www.uninformativ.de/twtxt-old_2021-09-22_5mkqwua.txt (configured as movq, https://www.uninformativ.de/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2023-06.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://www.uninformativ.de/twtxt-old_2021-07-27_xcnzmlq.txt (configured as movq, https://www.uninformativ.de/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2023-05.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://www.uninformativ.de/twtxt-old_2021-06-16_mtedqya.txt (configured as movq, https://www.uninformativ.de/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2023-04.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://www.uninformativ.de/twtxt-old_2021-04-29_z7lvzja.txt (configured as movq, https://www.uninformativ.de/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2023-03.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://www.uninformativ.de/twtxt-old_2021-03-19_xjabvhq.txt (configured as movq, https://www.uninformativ.de/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2023-02.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://www.uninformativ.de/twtxt-old_2021-02-24_te4a6oa.txt (configured as movq, https://www.uninformativ.de/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2023-01.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://www.uninformativ.de/twtxt-old_2021-01-26_qxgigma.txt (configured as movq, https://www.uninformativ.de/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2022-12.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://www.uninformativ.de/twtxt-old_2020-12-13_igfnala.txt (configured as movq, https://www.uninformativ.de/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2022-11.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2022-10.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2022-09.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2022-08.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2022-07.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2022-06.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2022-05.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2022-04.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2022-03.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2022-02.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2022-01.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2021-12.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2021-11.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2021-10.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2021-09.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2021-08.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2021-07.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2021-06.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2021-05.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2021-04.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2021-03.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2021-02.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2021-01.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Fetching archived feed https://lyse.isobeef.org/twtxt-2020-12.txt (configured as lyse, https://lyse.isobeef.org/twtxt.txt)

Notice that @prologic@twtxt.net’s /6 is there. I found the twtxt then. Kind of odd it didn’t show before.

@aelaraji@aelaraji.com I just added support for passing a custom template file via -T/--template in case you need a custom template 👌

prologic@JamessMacStudio

Wed Sep 18 01:27:29

~/Projects/yarnsocial/twtxt2html

(main) 130

$ ./twtxt2html --help

Usage: twtxt2html [options] FILE|URL

twtxt2html converts a twtxt feed to a static HTML page

-d, --debug enable debug logging

-l, --limit int limit number ot twts (default all) (default -1)

-n, --noreldate do now show twt relative dates

-r, --reverse reverse the order of twts (oldest first)

-T, --template string path to template file

-t, --title string title of generated page (default "Twtxt Feed")

-v, --version display version information

pflag: help requested

More:

Subject: The [tag URI scheme](https://en.wikipedia.org/wiki/Tag_URI_scheme) looks interesting. I like that it human read- and writable. And since we already got the timestamp in the twtxt.txt it would be

somewhat trivial to parse. But there are still the issue with what the name/id should be... Maybe it doesn't have to bee that stick? Instead of using `tag:` as the prefix/protocol, it would more it clear

what we are talking about by using `in-reply-to:` (https://indieweb.org/in-reply-to) or `replyto:` similar to `mailto:` 1. `(reply:sorenpeter@darch.dk,2024-09-15T12:06:27Z)' 2.

`(in-reply-to:darch.dk/twtxt.txt,2024-09-15T12:06:27Z)' 2. `(replyto:http://darch.dk/twtxt.txt,2024-09-15T12:06:27Z)' I know it's longer that 7-11 characters, but it's self-explaining when looking at the

twtxt.txt in the raw, and the cases above can all be caught with this regex: `\([\w-]*reply[\w-]*\:` Is this something that would work?

Subject: The [tag URI scheme](https://en.wikipedia.org/wiki/Tag_URI_scheme) looks interesting. I like that it human read- and writable. And since we already got the timestamp in the twtxt.txt it would be

somewhat trivial to parse. But there are still the issue with what the name/id should be... Maybe it doesn't have to bee that stick? Instead of using `tag:` as the prefix/protocol, it would more it clear

what we are talking about by using `in-reply-to:` (https://indieweb.org/in-reply-to) or `replyto:` similar to `mailto:` 1. `(reply:sorenpeter@darch.dk,2024-09-15T12:06:27Z)` 2.

`(in-reply-to:darch.dk/twtxt.txt,2024-09-15T12:06:27Z)` 3. `(replyto:http://darch.dk/twtxt.txt,2024-09-15T12:06:27Z)` I know it's longer that 7-11 characters, but it's self-explaining when looking at the

twtxt.txt in the raw, and the cases above can all be caught with this regex: `\([\w-]*reply[\w-]*\:` Is this something that would work?

Notice the difference? Soren edited, and broke everything.

@mckinley@twtxt.net Thanks for the feedback.

- Yeah, I agree that the nick should not be part of the syntax. Any valid URL to a twtxt.txt file should be enough and is clearer, so it is not confused with an email address (one of the issues with webfinger and fediverse handles).

- I think any valid URL would work, since we are not bound to look for exact matches. Accepting both http and https, as well as gemini and gopher, could all work as long as the path to the twtxt.txt is the same.

- My idea is that you quote the timestamp as it is in the original twtxt.txt that you are referring to, so you can do it by simply copy/pasting. Also, what are the chances that the same human will make two different posts within the same second?!

Regarding the whole idea of cryptographic keys for identity, to me it seems like an unnecessary layer of complexity. If you move to a new house or city, you tell people that you moved. You can do the same in a twtxt.txt. Just post something like “I moved to this new URL, please follow me there!” I did that with my feeds at least twice, and you guys still seem to read my posts :)

The tag URI scheme looks interesting. I like that it is human-readable and writable. And since we already have the timestamp in the twtxt.txt, it would be somewhat trivial to parse. But there is still the issue of what the name/id should be… Maybe it doesn’t have to be that strict?

Instead of using tag: as the prefix/protocol, it would make it clearer what we are talking about if we used in-reply-to: (https://indieweb.org/in-reply-to) or replyto:, similar to mailto:

(reply:sorenpeter@darch.dk,2024-09-15T12:06:27Z)

(in-reply-to:darch.dk/twtxt.txt,2024-09-15T12:06:27Z)

(replyto:http://darch.dk/twtxt.txt,2024-09-15T12:06:27Z)

I know it’s longer than 7-11 characters, but it’s self-explanatory when looking at the raw twtxt.txt, and the cases above can all be caught with this regex: \([\w-]*reply[\w-]*\:

Is this something that would work?
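
To see what the regex above does and does not catch, here is a rough Python sketch. REPLY_PREFIX is the pattern from the twt as written. REPLY_REF and parse_reply are hypothetical additions (not part of the proposal) that also pull out the feed identifier and the timestamp, assuming neither contains a comma, whitespace or a closing parenthesis.

```python
import re

# Regex quoted in the twt above; it only detects the start of a reply reference.
REPLY_PREFIX = re.compile(r"\([\w-]*reply[\w-]*\:")

# Hypothetical stricter pattern (an assumption, not part of the proposal)
# that also captures the feed identifier and the timestamp.
REPLY_REF = re.compile(
    r"\((?P<scheme>[\w-]*reply[\w-]*):(?P<feed>[^,\s]+),(?P<ts>[^)\s]+)\)"
)


def parse_reply(text):
    """Return (scheme, feed, timestamp) for the first reply reference, or None."""
    match = REPLY_REF.search(text)
    if match is None:
        return None
    return match.group("scheme"), match.group("feed"), match.group("ts")


examples = [
    "(reply:sorenpeter@darch.dk,2024-09-15T12:06:27Z) Sounds good",
    "(in-reply-to:darch.dk/twtxt.txt,2024-09-15T12:06:27Z) Sounds good",
    "(replyto:http://darch.dk/twtxt.txt,2024-09-15T12:06:27Z) Sounds good",
]

for line in examples:
    print(parse_reply(line))
```

All three variants match the quoted prefix regex, so a client could accept any of them and normalise afterwards.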

Weird, I can’t set up my iwm0 interface to rdomain 1 : ifconfig: SIOCSIFRDOMAIN: Invalid argument. What am I missing? #openbsd

I was not suggesting that everyone needs to set up a working webfinger endpoint, but that we take the format of nick+(sub)domain as the base for generating the hash, together with the message date and content.

If we omit the protocol prefix from the way we do things now, will that not solve most of the problems? In the case of gemini://gemini.ctrl-c.club/~nristen/twtxt.txt they also have a working twtxt.txt at https://ctrl-c.club/~nristen/twtxt.txt … damn, I just noticed the gemini. subdomain.

Okay, what about defining a preferred protocol order as part of the hash scheme? So 1: https, 2: http, 3: gemini, 4: gopher?
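
A rough Python sketch of both ideas, just to make them concrete. The ranking table mirrors the order suggested above. The function names preferred_url and without_protocol are made up for illustration and are not taken from any existing client.

```python
from urllib.parse import urlsplit

# Preference order from the twt above: the lower number wins.
PROTOCOL_RANK = {"https": 1, "http": 2, "gemini": 3, "gopher": 4}


def preferred_url(urls):
    """Pick the URL whose scheme ranks best; unknown schemes sort last."""
    return min(urls, key=lambda u: PROTOCOL_RANK.get(urlsplit(u).scheme, 99))


def without_protocol(url):
    """Drop the scheme so http/https variants of the same feed compare equal."""
    parts = urlsplit(url)
    return parts.netloc + parts.path


gemini_feed = "gemini://gemini.ctrl-c.club/~nristen/twtxt.txt"
https_feed = "https://ctrl-c.club/~nristen/twtxt.txt"

print(preferred_url([gemini_feed, https_feed]))
print(without_protocol(gemini_feed))
print(without_protocol(https_feed))
```

Dropping the protocol alone still leaves the two ctrl-c.club URLs different because of the gemini. subdomain, which is why a preference order might be needed on top.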

# follow_notify = gemini://foo/bar to your feed’s metadata, so that clients who follow you can ping that URL every now and then? How would you even notice that, do you regularly read your gemini logs? 🤔

@movq@www.uninformativ.de @prologic@twtxt.net Hey! I may have found a silly trick to announce my following to people hosting their feeds in Gemini space, using the requested URI itself instead of relying on the User-Agent 😂. I’ve copied my current feed over to my (to be) Gemlog for testing. If I do a jenny -D "gemini://gem.aelaraji.com/twtxt.txt?follower=aelaraji@https://aelaraji.com/twtxt.txt", this happens:

A) As a follower, I get the feed as usual.

B) As the feed owner, I get this in logs:

hostname:1965 - “gemini://gem.aelaraji.com/twtxt.txt?follower=aelaraji@https://aelaraji.com/twtxt.txt” 20 “text/plain;lang=en-US”

You could do the same for Gopher feeds, but only if you are willing to announce yourself by triggering an error in their logs; you would then need a second request to fetch the feed. jenny -D "gopher://gopher.aelaraji.com/twtxt.txt&follower=aelaraji@https:/aelaraji.com/twtxt.txt" gave me this:

gopher.aelaraji.com:70 - [09/Sep/2024:22:08:54 +0000] “GET 0/twtxt.txt&follower=aelaraji@https:/aelaraji.com/twtxt.txt HTTP/1.0” 404 0 “” “Unknown gopher client”

NB: the follower=... string won’t appear in gopher logs after a ?, but if I replace it with a + or a &, it works. There will be a missing / after the https:. Probably a client thing.

@falsifian@www.falsifian.org In my opinion it was a mistake that we defined the first url field in the feed to define the URL for hashing. It should have been the last encountered one. Then, assuming append-style feeds, you could override the old URL with a new one from a certain point on:

# url = https://example.com/alias/txtxt.txt

# url = https://example.com/initial/twtxt.txt

<message 1 uses the initial URL>

<message 2 uses the initial URL, too>

# url = https://example.com/new/twtxt.txt

<message 3 uses the new URL>

# url = https://example.com/brand-new/twtxt.txt

<message 4 uses the brand new URL>

In theory, the same could be done for prepend-style feeds. They do exist, I’ve come across them. The parser would just have to calculate the hashes afterwards and not immediately.
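
For append-style feeds, the “last encountered url wins” rule could look roughly like this in Python. It is only a sketch of the idea above, not how jenny or any other client parses feeds today. The function name urls_per_message and the sample timestamps are invented for the example.

```python
def urls_per_message(lines):
    """Pair each twt line with the most recent '# url = ...' seen above it."""
    current_url = None
    result = []
    for line in lines:
        stripped = line.strip()
        if not stripped:
            continue
        if stripped.startswith("#"):
            # Metadata comment: only the 'url' key matters for this sketch.
            key, sep, value = stripped[1:].partition("=")
            if sep and key.strip() == "url":
                current_url = value.strip()
            continue
        result.append((current_url, stripped))
    return result


feed = """\
# url = https://example.com/alias/twtxt.txt
# url = https://example.com/initial/twtxt.txt
2024-01-01T00:00:00Z message 1
2024-01-02T00:00:00Z message 2
# url = https://example.com/new/twtxt.txt
2024-01-03T00:00:00Z message 3
""".splitlines()

for url, twt in urls_per_message(feed):
    print(url, "|", twt)
```

A prepend-style feed would need a first pass to collect all lines before the URLs can be assigned, as noted above.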

@prologic@twtxt.net Some criticisms and a possible alternative direction:

Key rotation. I’m not a security person, but my understanding is that it’s good to be able to give keys an expiry date and replace them with new ones periodically.

It makes maintaining a feed more complicated. Now instead of just needing to put a file on a web server (and scan the logs for user agents) I also need to do this. What brought me to twtxt was its radical simplicity.

Instead, maybe we should think about a way to allow old urls to be rotated out? Like, my metadata could somehow say that X used to be my primary URL, but going forward from date D onward my primary url is Y. (Or, if you really want to use public key cryptography, maybe something similar could be used for key rotation there.)

It’s nice that your scheme would add a way to verify the twts you download, but https is supposed to do that anyway. If you don’t trust https to do that (maybe you don’t like relying on root CAs?) then maybe your preferred solution should be reflected by your primary feed url. E.g. if you prefer the security offered by IPFS, then maybe an IPNS url would do the trick. The fact that feed locations are URLs gives some flexibility. (But then rotation is still an issue, if I understand ipns right.)

On the Subject of Feed Identities; I propose the following:

- Generate a Private/Public ED25519 key pair

- Use this key pair to sign your Twtxt feed

- Use it as your feed’s identity in place of # url = as # key = ...

For example:

$ ssh-keygen -f prologic@twtxt.net

$ ssh-keygen -Y sign -n prologic@twtxt.net -f prologic@twtxt.net twtxt.txt

And your feed would look like:

# nick = prologic

# key = SHA256:23OiSfuPC4zT0lVh1Y+XKh+KjP59brhZfxFHIYZkbZs

# sig = twtxt.txt.sig

# prev = j6bmlgq twtxt.txt/1

# avatar = https://twtxt.net/user/prologic/avatar#gdoicerjkh3nynyxnxawwwkearr4qllkoevtwb3req4hojx5z43q

# description = "Problems are Solved by Method" 🇦🇺👨💻👨🦯🏹♔ 🏓⚯ 👨👩👧👧🛥 -- James Mills (operator of twtxt.net / creator of Yarn.social 🧶)

2024-06-14T18:22:17Z (#nef6byq) @<bender https://twtxt.net/user/bender/twtxt.txt> Hehe thanks! 😅 Still gotta sort out some other bugs, but that's tomorrows job 🤞

...

Twt Hash extension would change of course to use a feed’s ED25519 public key fingerprint.
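
As a rough illustration of that change, here is a Python sketch. It assumes the hash is still computed the way I understand the current Twt Hash extension to work (BLAKE2b-256 over identity, timestamp and text joined by newlines, base32-encoded, lowercase, no padding, last 7 characters), with the key fingerprint swapped in where the feed URL goes today. That substitution and the function name twt_hash are assumptions, nothing here is specified yet.

```python
import base64
import hashlib


def twt_hash(identity, timestamp, text):
    """BLAKE2b-256 over identity, timestamp and text; base32, last 7 chars."""
    payload = "\n".join((identity, timestamp, text)).encode("utf-8")
    digest = hashlib.blake2b(payload, digest_size=32).digest()
    encoded = base64.b32encode(digest).decode("ascii").lower().rstrip("=")
    return encoded[-7:]


# Identity as it works today (feed URL) versus the proposed key fingerprint.
by_url = twt_hash(
    "https://twtxt.net/user/prologic/twtxt.txt",
    "2024-06-14T18:22:17Z",
    "Hehe thanks!",
)
by_key = twt_hash(
    "SHA256:23OiSfuPC4zT0lVh1Y+XKh+KjP59brhZfxFHIYZkbZs",
    "2024-06-14T18:22:17Z",
    "Hehe thanks!",
)
print(by_url, by_key)
```

With the fingerprint as identity, moving the feed to a new URL would leave existing hashes untouched, while rotating the key would change them.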