cli test 3

3°C today, it was quite nice in the sun. A lot of hunting and tree felling going on in the forest. And we met the heron again, that was very cool: https://lyse.isobeef.org/waldspaziergang-2024-12-28/

And now some stupid fuckwits are burning firecrackers again. Very annoying. Can we please ban this shit once and forever!?

Oh no!

Wife and I agreed to hibernate until January, just visiting relatives but avoiding any kind of shopping. I tried buying something like 2 or 3 days ago and it’s insane :o

Good luck! :)

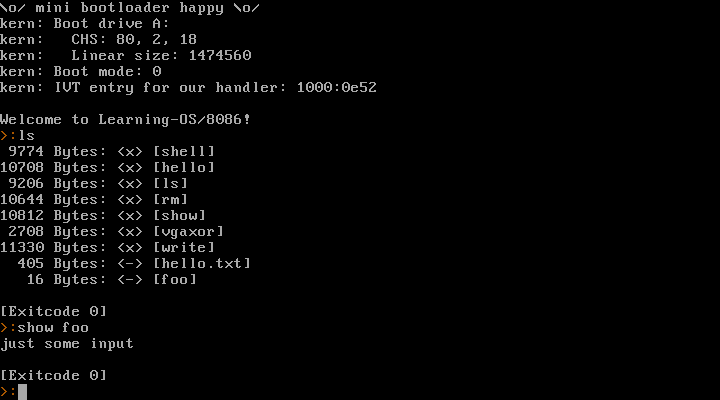

I’ve been making a little toy operating system for the 8086 in the last few days. Now that was a lot of fun!

I don’t plan on making that code public. This is purely a learning project for myself. I think going for real-mode 8086 + BIOS is a good idea as a first step. I am well aware that this isn’t going anywhere – but now I’ve gained some experience and learned a ton of stuff, so maybe 32 bit or even 64 bit mode might be doable in the future? We’ll see.

It provides a syscall interface, can launch processes, read/write files (in a very simple filesystem).

Here’s a video where I run it natively on my old Dell Inspiron 6400 laptop (and Warp 3 later in the video, because why not):

https://movq.de/v/893daaa548/los86-p133-warp3.mp4

(Sorry for the skewed video. It’s a glossy display and super hard to film this.)

It starts with the laptop’s boot menu and then boots into the kernel and launches a shell as PID 1. From there, I can launch other processes (anything I enter is a new process, except for the exit at the end) and they return to the shell afterwards.

And a screenshot running in QEMU:

Glad you like them, @aelaraji@aelaraji.com. Anytime!

Recovery run: 3.11 miles, 00:11:27 average pace, 00:35:33 duration

@movq@www.uninformativ.de I know, nobody asked 🤡 but, here are a couple of suggestions:

- If you’re willing to pay for a licence, I’d highly recommend Plasticity; it’s under the GNU Lesser General Public License, Version 3.

- Otherwise, if you already have experience with CAD/parametric modeling, you could give FreeCAD a spin; it’s under the GNU Library General Public License, version 2.0. It took them years, but they have just recently shipped their v1.0 👍

- or just roll with Autodesk’s Fusion for personal use, if you don’t mind their “Oh! You need to be online to use it” thing.

(Let’s face it, Blender is hard to use.)

I bet you’re talking about blender 2.79 and older! 😂 you are, right? JK

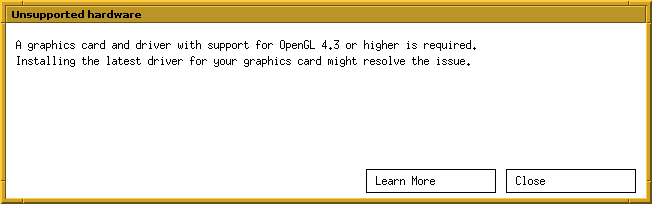

Goodbye Blender, I guess? 🤔

A bit annoying, but not much of a problem. The only thing I did with Blender was make some very simple 3D-printable objects.

I’ll have a look at the alternatives out there. Worst case is I go back to Art of Illusion, which I used heavily ~15 years ago.

Thank you, @movq@www.uninformativ.de! Luckily, I can disable it. I also tried it, no luck, though. But the problem is, I don’t really know how much snakeoil actually runs on my machine. There is definitely a ClownStrike infestation, I stopped the falcon sensor. But there might be even more, I’ve no idea. From the vague answers I got last time, it feels like even the UHD/IT guys don’t know what is in use. O_o

Yeah, it is definitely something on my laptop that rejects connections to IPv4 ports 80 and 443. All other devices here can access the stuff without issue, only this work machine is unable to. The “Connection refused” happens within a few milliseconds.

Unfortunately, I do not have the slightest idea how it works. But maybe I can look into that tomorrow. Kernel modules are a very good hint, thank you! <3

You’re right, it might be some sort of fail-safe mechanism. But then, why just block IPv4 and not also IPv6? But maybe because the VPN and company servers require IPv4, there is zero IPv6 support. (Yeah, don’t ask, I don’t understand it either.)

I’m on vacation now. First order of business: Sit in the armchair for “a few minutes” (= sleep tight for 3 hours straight). 😴

Arctic could see first ice-free day by 2027 + 3 more stories

Macron and Saudi Crown Prince to co-chair a conference for Palestinian state; UN investigates Venezuela’s election fraud; new UN aid chief prioritizes funding; study predicts ice-free Arctic by 2027 ⌘ Read more

@bender@twtxt.net That’s the plan! 3;)

Yes, it works: 2024-12-01T19:38:35Z twtxt/1.2.3 (+https://eapl.mx/twtxt.txt; @eapl) :D

The .log is just a simple append for each request. The idea with the .csv is to have it tally up how many requests there have been from each client, as a way to avoid having the log file grow too big. You can also open the .csv as a spreadsheet and get an easy overview and filtering options.

Access to those files is closed to the public.
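A rough sketch of the tally idea, in case it helps anyone building something similar. It assumes the log holds one User-Agent string per request, in the single-feed shape quoted above. The names `parse_user_agent`, `tally`, `to_csv` and the column layout are made up for illustration and are not taken from the actual script.

```python
import csv
import io
import re
from collections import Counter

# Matches the single-feed User-Agent shape, e.g.
# "twtxt/1.2.3 (+https://eapl.mx/twtxt.txt; @eapl)".
UA_PATTERN = re.compile(r"^(?P<client>\S+) \(\+(?P<url>\S+); @(?P<nick>[^)]+)\)$")


def parse_user_agent(user_agent):
    """Return (client, url, nick), or None if the header has another shape."""
    match = UA_PATTERN.match(user_agent.strip())
    if match is None:
        return None
    return match.group("client"), match.group("url"), match.group("nick")


def tally(user_agents):
    """Count requests per (client, url, nick); unparseable agents are skipped."""
    counts = Counter()
    for user_agent in user_agents:
        parsed = parse_user_agent(user_agent)
        if parsed is not None:
            counts[parsed] += 1
    return counts


def to_csv(counts):
    """Render the tally as CSV text, most frequent follower first."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(["client", "url", "nick", "requests"])
    for (client, url, nick), requests in counts.most_common():
        writer.writerow([client, url, nick, requests])
    return buffer.getvalue()
```

Because the tally keeps one row per follower rather than one per request, it stays small no matter how often a client polls.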

Pinellas County Running: 3.14 miles, 00:08:34 average pace, 00:26:55 duration

needed to get out. was going a bit crazy.

#running

@prologic@twtxt.net Oh! I’d like to see this one too. 3:)

SPRC Turkey Trot: 3.10 miles, 00:10:45 average pace, 00:33:16 duration

ran with my son on his scooter. definitely took lessons from the last two years and slowed it down so he did not cut off as many people and to avoid any wipe outs. besides him complaining for the first half that he was tired (around a bunch of people running) it was fun. got to open up a bit at the end since the crowds died down around then.

#running #race

@bender@twtxt.net Ta! <3

@bender@twtxt.net Nobody would notice if I stopped auto-syncing my twtxt file 3:) and if I’m careful enough I’d have plenty of time to fix my mess

P.S:

    ~/remote/htwtxt » podman image list htwtxt                      the@wks
    REPOSITORY                TAG           IMAGE ID      CREATED      SIZE
    localhost/htwtxt          1.0.5-alpine  13610a37e347  3 hours ago  20.1 MB
    localhost/htwtxt          1.0.7-alpine  2a5c560ee6b7  3 hours ago  20.1 MB
    docker.io/buckket/htwtxt  latest        c0e33b2913c6  8 years ago  778 MB

Pacific aid falls due to Ukraine focus + 3 more stories

Aid to the Pacific falls 18% as focus shifts to Ukraine; Russia launches its first ICBM in the conflict; ICC issues arrest warrants for Netanyahu and Hamas leaders; Oropouche virus cases in Brazil surge amid new strain investigations. ⌘ Read more

Hehe, although it isn’t a fancy language PHP has improved a lot since the old PHP 5 days ¯_(ツ)_/¯ It’s 3 to 5 times slower than Go, so I think that’s not too bad

@bender@twtxt.net Haha! I assume you can’t see the original twt, let me quote for you so you know what I’m responding to:

2024-11-20T07:56:00-06:00 (#gjhq2xq) Hey! I tried running Timeline on my server with the default PHP version (8.3) and it’s giving me a few errors https://eapl.me/timeline/ I should be sending a PR soon to fix it ;)

source: eaplme’s twtxt file.

undefined array keys and creation of dynamic properties being deprecated (CC: @sorenpeter )

@bender@twtxt.net > which feed of his are you following? the .mx or the .me one? I was replying to THIS TWT

Hey! I tried running Timeline on my server with the default PHP version (8.3) and it’s giving me a few errors https://eapl.me/timeline/ I should be sending a PR soon to fix it ;)

SPRF 5km: 3.14 miles, 00:08:47 average pace, 00:27:38 duration

race number two still feeling pretty good (minus a bit of a tweaked back) but kept a nice steady pace. not all out but felt like a good threshold session.

#running #race

SPRF 10km: 6.28 miles, 00:07:55 average pace, 00:49:44 duration

played this by feel. such a cool day with a breeze making everything feel comfortable. at around 3 or 4 miles in i realized my pace was probably pretty quick and told myself i would just attempt to maintain. near mile five it started to hurt but just pushed through and got a pb!

#running #race

Easy run: 3.13 miles, 00:09:51 average pace, 00:30:54 duration

nice chill run. first day where my resting heart rate was back down to low 50s. no idea what was going on because i did not feel sick but maybe it was just all the stress from life and a crazy october?

#running

Major polluters absent from climate summit + 3 more stories

Scientists warn of the potential collapse of the Atlantic current; major polluters skip UN climate talks amidst climate finance discussions; ADB boosts climate finance to $7.2 billion with US and Japan support; Israel fails to meet US deadline for increased humanitarian aid to Gaza. ⌘ Read more

thank god she’s an atheist <3

Thank you, @eapl.me@eapl.me! No need to apologize in the introduction, all good. :-)

Section 3: I’m a bit on the fence regarding documenting the HTTP caching headers. It’s a very general HTTP thing, so there is nothing special about them for twtxt. No need for the Twtxt Specification to actually redo it. But on the other hand, a short hint could certainly help client developers and feed authors. Maybe it’s thanks to my distro’s Nginx maintainer, but I did not configure anything for the Last-Modified and ETag headers to be included in the response, the web server just already did it automatically.

The more that I think about it while typing this reply, the more I think your suggested recommendation is actually really great. It will definitely be beneficial for client developers. In almost all client implementation cases I’d say one has to actually do something specifically in the code to send the If-Modified-Since and/or If-None-Match request headers. There is no magic that will do it automatically, as one has to combine data from the last response with the new request.

But I also came across feeds that serve zero response headers that make caching possible at all. So, an explicit recommendation enables feed authors to check their server setups. Yeah, let’s absolutely do this! :-)
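To make the "combine data from the last response with the new request" part concrete, here is a minimal sketch using only Python's standard library. The `cached` dict and the function names are hypothetical. A real client would store the validators next to the cached feed body.

```python
import urllib.request


def conditional_headers(cached):
    """Build request headers from the validators saved with the last response.

    `cached` is a hypothetical dict holding the previous response's
    "ETag" and "Last-Modified" header values, if the server sent any.
    """
    headers = {}
    if cached.get("ETag"):
        headers["If-None-Match"] = cached["ETag"]
    if cached.get("Last-Modified"):
        headers["If-Modified-Since"] = cached["Last-Modified"]
    return headers


def build_request(url, cached):
    """Create (but do not send) a GET request carrying the cache validators."""
    return urllib.request.Request(url, headers=conditional_headers(cached))


def remember_validators(response_headers):
    """Pick out the validators to store next to the cached feed body."""
    return {
        name: response_headers[name]
        for name in ("ETag", "Last-Modified")
        if response_headers.get(name)
    }
```

When such a request is sent with `urllib.request.urlopen`, a `304 Not Modified` answer surfaces as an `urllib.error.HTTPError` with `code == 304`, which the client can treat as "keep the cached copy". If a feed sends neither validator, `conditional_headers` returns an empty dict and every fetch is a full download, which is the case the recommendation is meant to help feed authors spot.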

Regarding section 4 about feed discovery: Yeah, non-HTTP transport protocols are an issue as they do not have User-Agent headers. How exactly do you envision the discovery_url to work, though? I wouldn’t limit the transports to HTTP(S) in the Twtxt Specification, though. It’s up to the client to decide which protocols it wants to support.

Since I currently rely on buckket’s twtxt client to fetch the feeds, I can only follow http(s):// (and file://) feeds. But in tt2 I will certainly add some gopher:// and gemini:// at some point in time.

Some time ago, @movq@www.uninformativ.de found out that some Gopher/Gemini users prefer to just get an e-mail from people following them: https://twtxt.net/twt/dikni6q So, it might not even be something to be solved as there is no problem in the first place.

Section 5 on protocol support: You’re right, announcing the different transports in the url metadata would certainly help. :-)

Section 7 on emojis: Your idea of TUI/CLI avatars is really intriguing I have to say. Maybe I will pick this up in tt2 some day. :-)

the smell of fog is soo gud <3

Easy run: 3.13 miles, 00:09:14 average pace, 00:28:55 duration

hot, legs were tight, but felt alright i guess.

#running

@eapl.me@eapl.me here are my replies (somewhat similar to Lyse’s and James’)

Metadata in twts: Key=value is too complicated for non-hackers and hard to write by hand. So if there is a need, then we should just use #NSFW or the alt-text field in markdown image syntax if something is NSFW.

IDs besides datetime: When you edit a twt, you should preserve the datetime if location-based addressing is to have any advantages over content-based addressing. If you change the timestamp, then it’s a new post. Just like any other blog CMS.

Caching: Yes, all good ideas, but that is more a task for the clients than for the serving of the twtxt.txt files.

Discovery: User-Agent for discovery can become better. I’m working on a wrapper script in PHP, so you don’t need to go to Apache’s log files to see who fetches your feed. But for others like Gemini and Gopher you need to rely on something else. That could be using my webmentions for twtxt suggestion, or simply defining an email metadata field for letting a person know you follow their feed. Interesting read about why WebMentions might be a bad idea. Since twtxt is much simpler than a full-featured IndieWeb site, a lot of the concerns do not apply here. But that’s the issue with any open inbox. This is hard to solve without some form of (centralized or community) spam moderation.

Support more protocols besides http/s: Yes, why not, if we can make clients that merge or differentiate between the same feed served via multiple URLs.

Languages: If the need is big, then make a separate feed. I don’t mind seeing stuff in other languages as long as the volume is low. You’ve got translation tools if you need to know what’s going on. And again, when there is a need for easier switching between posting to several feeds, then it’s about building clients with a UI that makes it easy. Not something that should take up space in the format/protocol.

Emojis: I’m not sure what this is about. Do you want to use emojis as avatars in CLI clients, or is it just about rendering emojis?

D + D → ³H, D + ³He → p (14.7 MeV) + ⁴He (3.7 MeV) + 18.4 MeV

@movq@www.uninformativ.de Some more options:

- Summer lightning.

- Obviously aliens!11!!!1

I once saw a light show in the woods originating most likely from a disco a few kilometers away. That was also pretty crazy. There was absolutely zero sound reaching the valley I was in.

Android phone with 4GB RAM. Jenny + mutt running in Termux. With change #tho4wpq from aelaraji, mutt loads in 3-5 seconds

@prologic@twtxt.net I wrote 1/4 (one slash four) by which I meant “the first out of four”. twtxt.net is showing it as ¼, a single character that IMO doesn’t have that same meaning (it means 0.25). Similarly, 3/4 got replaced with ¾ in another twt. It’s not a big deal. It just looks a little wrong, especially beside the 2/4 and 4/4 in my other two twts.

I read Starter Villain by John Scalzi. Enjoyable, like his other books that I’ve read. Somewhat sillier. (¾)

Simplified twtxt - I want to suggest some dogmas or commandments for twtxt, from where we can work our way back to how to implement different features like replies/threads:

- It’s a text file, so you must be able to write it by hand (i.e. no app logic) and read it by eye. If you edit a post, you change the content, not the timestamp. Otherwise it will be considered a new post.

- The order of lines in a twtxt.txt must not hold any significance. The file is a container and each line an atomic piece of information. You should be able to run sort on a twtxt.txt and it should still work.

- Transport protocol should not matter, as long as the file served is the same. HTTP and HTTPS are preferred, so it is suggested that feeds served via Gopher or Gemini also provide http(s).
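The order-independence commandment can be illustrated with a small sketch. It assumes the classic line format of an RFC 3339 timestamp, a tab, then the text, and that every timestamp carries an offset or a Z. `parse_twt` and `timeline` are illustrative names, not part of any existing client.

```python
from datetime import datetime


def parse_twt(line):
    """Split one feed line into (timestamp, text); None for comments/blank lines."""
    line = line.rstrip("\n")
    if not line or line.startswith("#") or "\t" not in line:
        return None
    stamp, text = line.split("\t", 1)
    # Older Python versions do not accept a trailing "Z" in fromisoformat().
    if stamp.endswith("Z"):
        stamp = stamp[:-1] + "+00:00"
    return datetime.fromisoformat(stamp), text


def timeline(lines):
    """Return twts ordered by their own timestamps, ignoring file order."""
    twts = [twt for twt in map(parse_twt, lines) if twt is not None]
    return sorted(twts, key=lambda twt: twt[0])
```

Since each line carries its own timestamp, a client that builds its view this way gets the same timeline whether the file is appended to, prepended to, or run through sort.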

Do we need more commandments?

Pinellas County Running: 3.51 miles, 00:09:22 average pace, 00:32:54 duration

ugh. i miss the low humidity of az. normal run to check out milton damage. huge trees blocking the pinellas trail.

#running

Installing Devuan 3.1 and Migrating to Ceres | https://starbreaker.org/blog/tech/installing-devuan-31-migrating-ceres/index.html

Pinellas County Running: 3.14 miles, 00:08:58 average pace, 00:28:07 duration

late evening run. i don’t even recall this one.

#running

More thoughts about changes to twtxt (as if we haven’t had enough thoughts):

1. There are lots of great ideas here! Is there a benefit to putting them all into one document? Seems to me this could more easily be a bunch of separate efforts that can progress at their own pace:

1a. Better and longer hashes.

1b. New possibly-controversial ideas like edit: and delete: and location-based references as an alternative to hashes.

1c. Best practices, e.g. Content-Type: text/plain; charset=utf-8

1d. Stuff already described at dev.twtxt.net that doesn’t need any changes.

We won’t know what will and won’t work until we try them. So I’m inclined to think of this as a bunch of draft ideas. Maybe later when we’ve seen it play out it could make sense to define a group of recommended twtxt extensions and give them a name.

Another reason for 1 (above) is: I like the current situation where all you need to get started is these two short and simple documents:

https://twtxt.readthedocs.io/en/latest/user/twtxtfile.html

https://twtxt.readthedocs.io/en/latest/user/discoverability.html

and everything else is an extension for anyone interested. (Deprecating non-UTC times seems reasonable to me, though.) Having a big long “twtxt v2” document seems less inviting to people looking for something simple. (@prologic@twtxt.net you mentioned an anonymous comment “you’ve ruined twtxt” and while I don’t completely agree with that commenter’s sentiment, I would feel like twtxt had lost something if it moved away from having a super-simple core.)

All that being said, these are just my opinions, and I’m not doing the work of writing software or drafting proposals. Maybe I will at some point, but until then, if you’re actually implementing things, you’re in charge of what you decide to make, and I’m grateful for the work.

Testing my internet.. 3,2,1… #Barcelona

“First world” countries problem number x:

More than 3,600 chemicals approved for food contact in packaging, kitchenware or food processing equipment have been found in humans, new peer-reviewed research has found, highlighting a little-regulated exposure risk to toxic substances.

@lyse@lyse.isobeef.org on this:

3.2 Timestamps: I feel no need to mandate UTC. Timezones are fine with me. But I could also live with this new restriction. I fail to see, though, how this change would make things any easier compared to the original format.

Exactly! If anything it will make things more complicated, no?

Good writeup, @anth@a.9srv.net! I agree with most of your points.

3.2 Timestamps: I feel no need to mandate UTC. Timezones are fine with me. But I could also live with this new restriction. I fail to see, though, how this change would make things any easier compared to the original format.

3.4 Multi-Line Twts: What exactly do you think are bad things with multi-lines?

4.1 Hash Generation: I do like the idea with a new uuid metadata field! Any thoughts on two feeds selecting the same UUID for whatever reason? Well, the same could happen today with url.

5.1 Reply to last & 5.2 More work to backtrack: I do not understand anything you’re saying. Can you rephrase that?

8.1 Metadata should be collected up front: I generally agree, but if the uuid metadata field were a feed URL and no real UUID, there should be probably an exception to change the feed URL mid-file after relocation.
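For anyone following along, here is a small sketch of why the feed URL (or a uuid standing in for it) matters for hashes. It assumes the recipe as I understand it from the Twt Hash extension: Blake2b-256 over feed URL, timestamp and text joined by newlines, base32 without padding, lowercased, last seven characters. Treat those details as an assumption and check the extension text before relying on them. The timestamp string is used as given here, without any normalisation.

```python
import base64
import hashlib


def twt_hash(feed_url, timestamp, text):
    """Content-based twt hash over feed URL, timestamp and text (assumed recipe)."""
    payload = "\n".join((feed_url, timestamp, text)).encode("utf-8")
    digest = hashlib.blake2b(payload, digest_size=32).digest()
    encoded = base64.b32encode(digest).decode("ascii").rstrip("=").lower()
    return encoded[-7:]
```

Because the first input is the feed's identity, moving a feed to a new URL changes every hash from that point on. That is the problem a stable uuid field would address, and also why a mid-file change of that value needs a defined rule.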

@sorenpeter@darch.dk Points 2 & 3 aren’t really applicable here in the discussion of the threading model really I’m afraid. WebMentions is completely orthogonal to the discussion. Further, no-one that uses Twtxt really uses WebMentions, whilst yarnd supports the use of WebMentions, it’s very rarely used in practise (if ever) – In fact I should just drop the feature entirely.

The use of WebSub OTOH is far more useful and is used by every single yarnd pod everywhere (not that there are that many around these days) to subscribe to feed updates in ~near real-time without having to poll constantly.

Some more arguments for a location-based threading model over a content-based one:

1. The format: (#<DATE URL>) or (@<DATE URL>) both make sense: # as prefix is for a hashtag like we already have with the (#twthash), and @ as prefix denotes that this is a mention of a specific post in a feed, and not just the feed in general. Using either can make implementation easier, since most clients already have this kind of filtering.

2. Having something like (#<DATE URL>) will also make mentions via webmentions for twtxt easier to implement, since there is no need for looking up the #twthash. This will also make it possible to build third-party twt-mention services.

3. Supporting twt/webmentions will also increase discoverability as a way to know about both replies and feed mentions from feeds that you don’t follow.
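A minimal sketch of how a client might pick such references out of a twt. It assumes the placeholder is written literally, with angle brackets around an RFC 3339 timestamp, a space, and the feed URL, e.g. (#<2024-09-15T12:06:27Z https://example.com/twtxt.txt>). That concrete syntax and the name `find_references` are assumptions for illustration only.

```python
import re
from datetime import datetime

# Assumed concrete syntax: "(#<TIMESTAMP URL>)" or "(@<TIMESTAMP URL>)".
REFERENCE = re.compile(r"\((?P<prefix>[#@])<(?P<date>\S+) (?P<url>\S+)>\)")


def find_references(text):
    """Return (prefix, timestamp, feed URL) for every location-based reference."""
    found = []
    for match in REFERENCE.finditer(text):
        stamp = match.group("date")
        # Older Python versions do not accept a trailing "Z" in fromisoformat().
        if stamp.endswith("Z"):
            stamp = stamp[:-1] + "+00:00"
        try:
            when = datetime.fromisoformat(stamp)
        except ValueError:
            continue  # not a usable timestamp, so not a reference we understand
        found.append((match.group("prefix"), when, match.group("url")))
    return found
```

The point about no lookup shows up directly: the reference itself says which feed to fetch and which line to look for, whereas a (#twthash) only resolves if the client has already seen the twt it points to.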

Reddington Shores - Long run (part I): 3.05 miles, 00:11:32 average pace, 00:35:10 duration

slower second half. more of a walk-run due to the sun blaring and high heart rate.

#running